1. INTRODUCTION

Citing previous research is an essential standard of science that gives credit to scholars and integrates results to advance knowledge. The indexing of scientific publications, particularly by computerized electronic database services that emphasize the tracking and analysis of citations to publications, is increasingly influential in modern research. Garfield (2006) summarized the development of systematic tracking of article citations for use with the Journal Impact Factor (JIF) and the Science Citation Index, which has become the Web of Science™ and Journal Citation Reports™ (Clarivate) bibliometric services. The JIF was developed to help librarians manage holdings with limited financial resources but has been co-opted by many as an indicator of vaguely defined journal and article quality.

The speed of knowledge advancement continues to increase with technological advances in computer-assisted electronic publication and indexing available through the Internet. The fast, hypercompetitive world of grants and publications pressures some researchers to cut corners, illogically ‘judging books by their covers’ by evaluating research reports according to the journal in which they appear, where that journal is indexed, or whether it has a JIF. This misuse of citation metrics such as the JIF has been conclusively refuted and criticized for several reasons, including skewed citation distributions and disciplinary differences in citation patterns (Adler et al., 2009; Bollen et al., 2006; Declaration on Research Assessment [DORA], n.d.; Hicks et al., 2015; Kurmis, 2003; Seglen, 1997; Smith, 1998).

Nevertheless, there has been a rapid proliferation of scientific journals (Ghasemi et al., 2022; Pandita & Singh, 2023) and of metrics to evaluate journals, articles, and authors (Casadevall & Fang, 2014; Roldan-Valadez et al., 2019; Wilsdon et al., 2015). Interest in journal metrics like the JIF for ranking journals has been described as fetishistic, foolish, mindless, insidious misuse, and an obsession (Adler et al., 2009; Hicks et al., 2015; Hussain, 2015; Osterloh & Frey, 2020; Tourish & Willmott, 2015; Willmott, 2011). Ranking journals using citation metrics results in imprecise and contradictory rankings of “core” or “top-tier” journal status (Knudson, 2024a; 2024c; Knudson et al., 2024; Mason & Singh, 2022; Pajić, 2015; Vîiu & Păunescu, 2021; Wilsdon et al., 2015). The numerous metrics, database sources for data, vague and conflicting definitions of publication quality dimensions, and several meta-science fields (bibliometrics, informetrics, and scientometrics) contribute to considerable miscommunication and conflicting results.

Progress in journal citation metric research is hampered by miscommunication arising from a lack of agreement on research/scholarly quality constructs and inconsistent use of terminology (Mason & Singh, 2022). Many terms are not defined (Egghe, 2022) and are used in conflicting and synonymous ways (Aksnes et al., 2019; Helmer et al., 2020; Knudson et al., 2024), including activity, contribution, influence, impact, importance, merit, output, popularity, progress, quality, rigor, usage, and visibility. Despite the terminological chaos, the consensus of the meta-science literature is that while journal metrics are positively associated with each other, they tend to serve as imprecise proxies for one of two constructs: usage/impact or prestige (Bollen et al., 2006; 2009; Franceschet, 2010; Leydesdorff et al., 2016; Zhou et al., 2012). Knudson et al. (2024, p. 95) defined these constructs: “Usage is the archive role of journals in providing transitory, shorter-term observations at the knowledge front (sometimes referred to as popularity). Prestige represents the perception of advancement and codification of knowledge.” Many scholars are not familiar with this two-factor structure or with evidence-based interpretation of citation metrics.

It is well known from analysis of citation content and context that citation behavior is multidimensional, varies in function and polarity, and differs across disciplines (Tahamtan & Bornmann, 2019). Disciplines also have widely varying numbers and timings of citations (Hayashi & Fujigaki, 1999; Seglen, 1997; Waltman, 2016). Nuanced use and interpretation of citation data allow study of knowledge flow and connections between disciplines (van Leeuwen & Tijssen, 2000; Wang et al., 2025). Misuse of citation metrics, however, often disadvantages interdisciplinary fields, journals, and research given their diversity and variation in citation patterns.

This issue can be particularly problematic in interdisciplinary fields such as kinesiology and biomechanics, which have widely varying citation patterns (Knudson, 2023; 2024b; Knudson et al., 2024). For example, a biomechanics scholar may be disadvantaged in a tenure and promotion review for publishing in a specialized biomechanics journal with a low JIF, which faculty incorrectly perceive as indicating low quality and prestige. In fact, the JIF is aligned with usage, which is relatively low because of the journal's size and specialization, even though the journal is the most relevant and respected in that research area. Grant or institutional evaluations that focus incorrectly on journal usage metrics like the JIF may disadvantage some interdisciplinary journals and research if the topics are not currently popular enough to attract many citations within the short (2-year) time window of the predominant JIF.

1.1. Citation Metrics in the Interdisciplinary Field of Biomechanics

Biomechanics is a diverse, interdisciplinary field with varying citation patterns and centrality of journals in which scholars publish (Knudson, 2023; 2024b). Journal evaluation and selection by authors in the interdisciplinary field of biomechanics is complicated by journal mission and other factors. Biomechanics includes the integration of biological and physical sciences, many kinds of animal and plant motion, and numerous areas of application (allied health, engineering, ergonomics, medicine, sports). These factors may contribute to wide variation in journal citation metrics across areas of biomechanics application (Knudson, 2014; 2015a; 2015b; 2023; 2024b). These studies report that the top 10 to 50 cited articles in biomechanics journals have median citations between 40 and 360 in Google Scholar and between 8 and 158 in Web of Science™ over two or more years.

This variation in citations to biomechanics journals is consistent with variation in citations to different research subject categories assigned to biomechanics journals (Knudson, 2023). Khademi et al. (2023) reported that word analysis of Iranian biomechanics articles indexed in Web of Science™ also favored the injury research categories of tissue biomechanics and orthopedics over the categories of body movements, computational, occupational and spine, and corneal biomechanics. The inconsistency in biomechanics journal metrics and subject categories agrees with evidence that American Society of Biomechanics (ASB) members’ perceptions of journal quality vary by their own research interest area in the society (Knudson & Chow, 2008).

A recent study documented the change in the centrality of the citation network of top cited biomechanics journals according to citations indexed in Journal Citation Reports™ from 2011 to 2021 using the “Journal Citation Relationships” report (Knudson, 2024b). This study found that the number of top journals cited in twelve seed biomechanics journals in Journal Citation Reports™ decreased 17% despite a five-fold increase in citations, indicating a considerable change and concentration of top journals cited in the field. Specifically, over the last decade mega-journals increased from one to eight of the top 25 cited journals in biomechanics. The top ten journals at the center of the biomechanics citation network in 2021 (based on two-year JIF as a proxy for overall academic usage) included five mega-journals (*): Journal of Biomechanics, Gait & Posture, Sensors*, Scientific Reports*, Journal of the Mechanical Behavior of Biomedical Materials, Applied Sciences*, Frontiers in Bioengineering and Biotechnology*, PLoS ONE*, Sports Biomechanics, and Journal of Biomechanical Engineering. Planning and publishing biomechanics research may be adversely influenced by the misuse of citation metrics in indexing, searching for, and evaluating research, and in selecting journal outlets for reports.

1.2. Purpose

This study extends knowledge of bias in citation patterns to top cited biomechanics journals related to the kinesiology/exercise science field by documenting journal citation metrics from three database services. It was hypothesized that top cited biomechanics journals would have variable citations and journal citation metrics. Appropriate interpretation of these metrics is illustrated, and recommendations are made to avoid their misuse in biomechanics and similar interdisciplinary fields.

2. METHODOLOGY

The Journal of Biomechanics and seven other top cited biomechanics journals related to kinesiology were selected as a sample. Biomechanics journals related to kinesiology were selected because this field is also interdisciplinary and is one of the five categories (“exercise and sports science”) that ASB members can identify as their area (ASB, n.d.). The present author, a scholar in both fields, selected these eight journals using the results of a previous study of the top 30 cited biomechanics journals (Knudson, 2024b, Table 3, p. 3261) based on the Journal Citation Relationships feature of Journal Citation Reports™. The Journal of Biomechanics is particularly relevant given that it is the oldest and most respected (Knudson & Chow, 2008) journal in the field, as well as the most cited and central (Knudson, 2024b). The next seven most cited journals related to kinesiology were also selected (Table 1). Journals that may occasionally report research by kinesiology scholars were excluded, primarily multidisciplinary mega-journals (Ioannidis et al., 2023) and journals in other areas of biomechanics (e.g., engineering).

Table 1

Descriptive statistics of citations to the top 20 cited articles published in 2021 in each journal (ranked by Google Scholar citations), by database service

| Journal | GS γ | GS Me | GS Max | GS Min | WoS γ | WoS Me | WoS Max | WoS Min | Dim γ | Dim Me | Dim Max | Dim Min |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| J Biomech | 2.1 | 70 | 243 | 46 | 2.4 | 45 | 164 | 31 | 2.0 | 52 | 179 | 37 |
| Gait Posture | 1.5 | 49 | 127 | 36 | 1.8 | 33 | 83 | 16 | 2.2 | 37 | 107 | 21 |
| Sports Biomech | 1.2 | 63 | 117 | 46 | 1.3 | 35 | 76 | 21 | 0.9 | 40 | 77 | 25 |
| Clin Biomech | 2.1 | 22 | 54 | 18 | 0.9 | 16 | 35 | 3 | 1.9 | 18 | 47 | 7 |
| J App Biomech | 1.2 | 11 | 22 | 10 | 1.9 | 8 | 20 | 4 | 1.6 | 9 | 19 | 5 |
| Hum Mov Sci | 1.4 | 33 | 88 | 13 | 1.3 | 19 | 49 | 6 | 1.3 | 23 | 62 | 5 |
| J EMG Kines | 2.8 | 22 | 85 | 13 | 1.7 | 15 | 50 | 5 | 3.0 | 16 | 81 | 8 |
| J Stren Cond Res | 1.8 | 94 | 242 | 76 | 2.2 | 47 | 129 | 33 | 2.2 | 56 | 143 | 45 |
| Mean | 1.7 | 46 | 122 | 33 | 1.7 | 27 | 76 | 15 | 1.9 | 31 | 77 | 19 |

Note. GS = Google Scholar; WoS = Web of Science™; Dim = Dimensions; γ = skewness; Me = median; Max = maximum; Min = minimum.

Three database services (Dimensions, Google Scholar, and Web of Science™) were searched to identify the top 20 cited articles that each of the eight biomechanics journals published in 2021, the same year analyzed by Knudson (2024b), along with their citations and citation rates. Meta-science studies focus on citations to the top percentiles (75% to 95%) of articles published by journals because of the strong positive skew of citations and the many uncited articles (Bornmann & Marx, 2014; 2015). Google Scholar citation totals were collected on March 21, 2025.

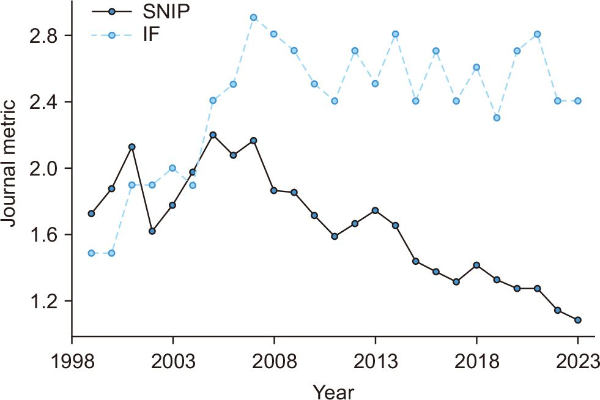

Four common journal citation metrics for the Journal of Biomechanics were also collected for the last five available years (2019-2023) to illustrate short-term, annual variation. These metrics usually align best with the scholarly usage/popularity construct and are based on the two most prestigious databases: Scopus® (Elsevier, Amsterdam, Netherlands) and Web of Science™ (Clarivate™, London, UK) (Pranckutė, 2021). Journal metrics from Scopus® data include the CiteScore (CS₄), SCImago Journal Rank (SJR₃), and Source-Normalized Impact per Paper (SNIP₃). The metric based on Web of Science™ data was the two-year Journal Impact Factor (JIF₂). Subscripts in metric abbreviations indicate the time window (in years) used to analyze citations. Additional historical data (1999-2023) for the JIF₂ and SNIP₃ of the Journal of Biomechanics were also collected to illustrate long-term trends and the differences between general and field-normalized usage metrics. These two metrics were selected because values were available for this longer timeframe and, given the high correlation among journal usage metrics, they would be expected to show trends similar to those of the other metrics.
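As a reminder of what JIF₂ summarizes: in its standard form, the two-year Journal Impact Factor for a year is the number of citations received that year by items a journal published in the previous two years, divided by the number of citable items from those two years. The minimal Python sketch below assumes a hypothetical list of per-item citation counts (illustrative numbers, not data from this study) and ignores Clarivate's rules for what counts as a citable item; it only shows how a mean-based ratio can sit well above the typical (median) item.

```python
from statistics import median

# Hypothetical citations received in year Y by each of 10 citable items
# published in years Y-1 and Y-2 (illustrative values, not study data).
citations_per_item = [0, 0, 1, 1, 2, 2, 3, 4, 12, 25]


def jif2(citations):
    """Simplified two-year JIF: total citations in year Y to items from
    Y-1 and Y-2, divided by the number of those citable items."""
    if not citations:
        raise ValueError("need at least one citable item")
    return sum(citations) / len(citations)


print(jif2(citations_per_item))    # 5.0 (mean, pulled up by two highly cited items)
print(median(citations_per_item))  # 2.0 (the typical item)
```

The gap between the mean-based value and the median is the kind of distortion that positively skewed citation distributions produce, which is one reason median values are preferred for comparison.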

Data were analyzed with JMP Pro 17.2.0 (SAS Institute, Cary, NC, USA). Continuous data were plotted and tested for normality with the Shapiro-Wilk test and by calculating skew. Appropriate descriptive statistics were calculated and reported. Inferential statistical analysis across time or journals was necessarily limited given the small number of journals in this specialization of biomechanics and their widely varying size.
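The skew (γ) values in Table 1 summarize how strongly each citation distribution leans to the right. JMP's exact skewness formula is not stated in the text, so the Python sketch below assumes the adjusted Fisher-Pearson sample coefficient (often labeled G1), a common estimator for this purpose; the citation counts in the usage line are hypothetical. SciPy users could add a Shapiro-Wilk test with `scipy.stats.shapiro`; it is omitted here to keep the example dependency-free.

```python
from statistics import mean, stdev


def sample_skewness(values):
    """Adjusted Fisher-Pearson sample skewness (G1).

    Assumed estimator: JMP's exact formula is not given in the text.
    Positive values indicate a long right tail, as with citation counts.
    """
    n = len(values)
    if n < 3:
        raise ValueError("need at least three values")
    m = mean(values)
    s = stdev(values)  # sample SD with an n - 1 denominator
    if s == 0:
        return 0.0
    return n / ((n - 1) * (n - 2)) * sum(((x - m) / s) ** 3 for x in values)


# Hypothetical citation counts for a handful of articles (not study data).
print(round(sample_skewness([10, 12, 13, 15, 18, 22, 40, 85]), 2))
```

A few highly cited articles are enough to push the coefficient well above zero, which is why medians rather than means are reported in Table 1.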

3. RESULTS AND DISCUSSION

3.1. Citation Variation and Usage

Citations to the top cited articles in the biomechanics journals had large positive skews in all databases, with median citations varying from 11 to 94 (Google Scholar), 8 to 47 (Web of Science™), and 9 to 56 (Dimensions). The top values from Google Scholar and Web of Science™ were within the range of 8 to 360 citations reported for biomechanics journals (Knudson, 2014; 2015a; 2015b; 2023; 2024b). Converting the median citations in Table 1 to citation rates (citations/3 years) shows variation from 2.7 to 3.7 citations per year for the top 20 articles in the Journal of Applied Biomechanics, up to 15 to 23.3 citations per year for the Journal of Biomechanics. This confirms past reports of large variation in citation rates (3 to 79 citations/year) across biomechanics journals (Knudson, 2014; 2015a; 2015b; 2023; 2024b). Citation variation across biomechanics journals from different subject categories is also large, calling into question the ability to field-normalize research in biomechanics (Knudson, 2023). Strong citations and citation rates for some biomechanics journals represent impressive scholarly usage given that medical and sports medicine journals tend to have larger numbers of references, earlier citation, and higher citation rates than most other disciplines, such as biomechanics (Adler et al., 2009; Dorta-González & Dorta-González, 2013; Finardi, 2014; Knudson, 2013; 2015b; 2022; Patience et al., 2017).
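The citation-rate conversion is simple division. The Python sketch below reproduces it from the Table 1 medians for the two journals at either end of the range, assuming, as the text does, a 3-year citation window and rounding to one decimal place; the dictionary keys are labels chosen for this example.

```python
# Median citations to the top 20 cited 2021 articles (Table 1), by database.
medians = {
    "J Biomech": {"Google Scholar": 70, "Web of Science": 45, "Dimensions": 52},
    "J App Biomech": {"Google Scholar": 11, "Web of Science": 8, "Dimensions": 9},
}
# Assumption taken from the text: 2021 articles, citations counted about 3 years later.
YEARS_SINCE_PUBLICATION = 3


def citation_rates(medians_by_db, years=YEARS_SINCE_PUBLICATION):
    """Convert median citation counts to citations per year (1 decimal place)."""
    return {db: round(count / years, 1) for db, count in medians_by_db.items()}


for journal, by_db in medians.items():
    rates = citation_rates(by_db)
    print(journal, min(rates.values()), "to", max(rates.values()), "citations/year")
# J Biomech 15.0 to 23.3 citations/year
# J App Biomech 2.7 to 3.7 citations/year
```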

The Journal of Biomechanics consistently had high median citations (45 to 70) compared to the other biomechanics journals but had nominally lower median citations than the Journal of Strength and Conditioning Research (47 to 94) in all three databases (Table 1). This contributed to a higher subsequent 2021 JIF₂ for the Journal of Strength and Conditioning Research (4.4) than for the Journal of Biomechanics (2.8). A minority of the top 20 cited articles in the Journal of Strength and Conditioning Research, however, relate to biomechanics theory, methods, or variables. The common inference that a biomechanics article would likely receive more usage in the Journal of Strength and Conditioning Research, based only on the journal's citations in any database or its JIF₂, would therefore be misleading given the skew in citations and the smaller number of biomechanics articles in the journal. In 2021, the Journal of Biomechanics was ranked 65th of 98 journals by JIF₂ in the Engineering, Biomedical ‘category’, while the Journal of Strength and Conditioning Research was ranked 18th of 88 journals by JIF₂ in the Sports Sciences category. Journal Citation Reports™ currently classifies the Journal of Strength and Conditioning Research into the Sports Sciences (n=127) category and the Journal of Biomechanics into the Biophysics (n=77) and Engineering, Biomedical (n=123) categories. Comparing these journals with the JIF₂ from this database would not be appropriate because the journals cover different disciplines of science and the journals ranked in each category vary over time.

Table 2 lists the above-average short-term (2019-2023) usage values for the Journal of Biomechanics based on four journal citation metrics. The journal currently ranks 133rd of 400 journals by CS₄ and 101st of 326 by SJR₃ in the biomedical engineering ‘subject area’ of Scopus® for 2023. The short-term relative variation (coefficient of variation [CV] between 7% and 16%) of this top journal was slightly lower than the five-year variation (CV=12-21%) reported for five metrics from fourteen biomechanics journals (Knudson & Quimby, 2023). Year-to-year changes greater than 10% to 30% (Amin & Mabe, 2003; Haghdoost et al., 2014; Knudson & Quimby, 2023; Ogden & Bartley, 2008) and statistical tests (Bornmann, 2017; Schubert & Glänzel, 1983) have been advocated as standards for meaningful change in most journal citation metrics. A scholar interpreting these recent metrics should note that the journal has slightly above-average field-normalized usage according to SNIP₃ (1.1 to 1.4, where 1.0 is average), with closer to average general usage based on CS₄, JIF₂, and SJR₃. This should be interpreted as confirmatory evidence of likely usage of articles published in the journal. Two qualifications apply: these metrics are biased by the positive skew of citations to articles in journals, so median values are most appropriate for comparison, and they are tentative proxies for scholarly usage, not rigor or prestige.
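The meaningful-change standards can be applied mechanically to the annual values in Table 2. The Python sketch below computes year-to-year percent changes and flags those at or above two thresholds that bracket the 10% to 30% range cited above. It assumes the rounded values printed in Table 2; the unrounded metrics, which are not reported here, could give slightly different percentages.

```python
# Table 2 values (2019-2023) for the Journal of Biomechanics, as rounded in the table.
YEARS = [2019, 2020, 2021, 2022, 2023]
metrics = {
    "CS4": [4.7, 4.3, 4.4, 4.9, 5.1],
    "SJR3": [1.0, 0.8, 0.8, 0.7, 0.7],
    "SNIP3": [1.3, 1.3, 1.3, 1.2, 1.1],
    "JIF2": [2.3, 2.7, 2.8, 2.4, 2.4],
}


def year_to_year_changes(values):
    """Percent change from each year to the next."""
    return [100 * (later - earlier) / earlier for earlier, later in zip(values, values[1:])]


def flag_changes(values, threshold_pct):
    """(year, % change) pairs whose absolute change meets the threshold."""
    changes = year_to_year_changes(values)
    return [
        (year, round(change, 1))
        for year, change in zip(YEARS[1:], changes)
        if abs(change) >= threshold_pct
    ]


# 10% and 30% bracket the range of standards cited in the text.
for name, values in metrics.items():
    print(name, "| >=10%:", flag_changes(values, 10), "| >=30%:", flag_changes(values, 30))
```

With these rounded values no year-to-year change reaches 30%, while a 10% criterion flags one change in CS₄, two each in SJR₃ and JIF₂, and none in SNIP₃. Rounding to one decimal place can exaggerate small changes in low-valued metrics such as SJR₃, so unrounded values would be preferable in practice.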

Table 2

Recent variation in four major journal citation metrics for the Journal of Biomechanics

| Year or statistic | CS₄ | SJR₃ | SNIP₃ | JIF₂ |
|---|---|---|---|---|
| 2019 | 4.7 | 1.0 | 1.3 | 2.3 |
| 2020 | 4.3 | 0.8 | 1.3 | 2.7 |
| 2021 | 4.4 | 0.8 | 1.3 | 2.8 |
| 2022 | 4.9 | 0.7 | 1.2 | 2.4 |
| 2023 | 5.1 | 0.7 | 1.1 | 2.4 |
| Mean | 4.68 | 0.80 | 1.24 | 2.52 |
| SD | 0.33 | 0.12 | 0.09 | 0.22 |
| CV (%) | 7.15 | 15.52 | 7.21 | 8.60 |
| Upper 95% CI | 5.10 | 0.95 | 1.35 | 2.79 |
| Lower 95% CI | 4.26 | 0.65 | 1.13 | 2.25 |
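The summary rows of Table 2 follow from standard formulas: the sample mean and SD, CV as SD divided by the mean, and a t-based 95% confidence interval for the mean with n = 5 years. The Python sketch below is a reconstruction under those assumptions (the text does not list JMP's settings). Recomputing from the rounded annual values reproduces the table for CS₄, SNIP₃, and JIF₂; for SJR₃ it gives a CV of about 15.3% rather than 15.52%, a small difference that may reflect unrounded source values.

```python
import math
from statistics import mean, stdev

# Two-sided 95% critical value of Student's t with 4 degrees of freedom (n = 5 years).
T_CRIT_DF4 = 2.776445


def describe(values, t_crit=T_CRIT_DF4):
    """Mean, sample SD, CV (%), and t-based 95% CI for the mean, rounded to 2 decimals."""
    n = len(values)
    m = mean(values)
    sd = stdev(values)  # n - 1 denominator
    half_width = t_crit * sd / math.sqrt(n)
    return {
        "mean": round(m, 2),
        "sd": round(sd, 2),
        "cv_pct": round(100 * sd / m, 2),
        "ci": (round(m - half_width, 2), round(m + half_width, 2)),
    }


print(describe([2.3, 2.7, 2.8, 2.4, 2.4]))  # JIF2 column of Table 2
# {'mean': 2.52, 'sd': 0.22, 'cv_pct': 8.6, 'ci': (2.25, 2.79)}
```

The fixed critical value only suits five observations; a different number of years would need the matching t value.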

3.2. Long-Term Usage and Evidence-Based Interpretation

Fig. 1 illustrates long-term, opposing trends in the SNIP₃ and JIF₂ usage metrics for the Journal of Biomechanics, beginning in the early 2000s. This example illustrates the different information that a general journal usage metric and a field-normalized metric can provide. How should these usage results be interpreted by biomechanists interested in this journal as a publication outlet? First, biomechanists should review meta-science research to understand that the average citation rate represented by JIF₂ has more bias (positive skew of citations, a short time window, and no normalization for disciplinary citation patterns) than SNIP₃, so the magnitude of the JIF₂ as a proxy estimate of overall scholarly usage in the Web of Science™ database service is hard to interpret. SNIP₃ has a longer time window and field normalization, so it may serve as a proxy estimate of field (albeit interdisciplinary) usage from a larger database (Scopus®). Second, any change in these metrics should be interpreted in light of year-to-year variation (Table 2) and the 20-30% change needed for most metrics to be meaningful for biomechanics journals (Knudson & Quimby, 2023). Interpreting the size of journal metric values also requires consideration of mediating factors. For example, as with many journal metrics, one cannot calculate SNIP₃ oneself because the Scopus® subject area used is unclear, given that the journal currently has four (biomedical engineering, biophysics, orthopedics and sports medicine, and rehabilitation). Two of these subject areas are similar to Journal Citation Reports™ categories but cover more journals: Biophysics (n=225) and Engineering, Biomedical (n=400).
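Scopus computes SNIP with its own citation-potential method, which is not reproduced here. The Python sketch below only illustrates the general idea behind field normalization, dividing a journal's citations per paper by a field baseline, using a hypothetical raw rate and two hypothetical field baselines to show why the choice of subject area matters.

```python
def field_normalized(journal_cites_per_paper: float, field_cites_per_paper: float) -> float:
    """Generic mean-based field normalization (not the Scopus SNIP algorithm).

    1.0 means the journal is cited at its field's average rate.
    """
    if field_cites_per_paper <= 0:
        raise ValueError("field baseline must be positive")
    return journal_cites_per_paper / field_cites_per_paper


# Hypothetical: one journal's raw citation rate against two candidate field baselines.
raw = 3.0
baselines = {"hypothetical field A": 2.0, "hypothetical field B": 4.0}
for field, baseline in baselines.items():
    print(field, field_normalized(raw, baseline))
# hypothetical field A 1.5
# hypothetical field B 0.75
```

The same raw rate reads as above or below average depending on the baseline. An unclear subject-area assignment therefore makes field-normalized values hard to reproduce or compare.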

The SNIP₃ data in Fig. 1 indicate a gradual decline in ‘field’ usage of average articles in the Journal of Biomechanics, from about twice the field average to about average (1.1). Given that this drop is about 50%, there may have been a meaningful decrease in field-specific usage of Journal of Biomechanics articles beginning after 2007 based on SNIP₃. This result is consistent with the shift in the biomechanics citation network toward several more mega-journals than in the past (Knudson, 2024b). The apparent decline in field-normalized usage of the Journal of Biomechanics, however, provides no information on journal or article quality and could reflect indexing bias, subject area variation, an artifact of shifting citation patterns in biomechanics (Knudson, 2024b), or the recent rapid growth of biomedical articles published in multidisciplinary and specialized mega-journals (Ioannidis et al., 2023; Siler et al., 2020). Further research is needed to improve the transparency and consistency of subject categories and field normalization for disciplinary and interdisciplinary fields and journals.

The overall usage of average Journal of Biomechanics articles based on JIF₂ and Journal Citation Reports™, however, has increased from 1.5 to 2.5, approaching earlier values (Fig. 1). Zadpoor and Nikooyan (2011) reported increases in the JIF₂ of 14 biomechanics journals from 2003 to 2010. They also noted, however, that the JIF₂ increased more slowly in biomechanics journals than in journals in other Journal Citation Reports™ subject categories (e.g., biomedical, biomaterials). While this increase in overall usage beginning in the early 2000s is larger than the 30% standard, are there other factors to consider? One is a long history of increases in the JIF (Althouse et al., 2009), with recent increases from 1.1 in 1997 to 2.2 in 2016 (Fischer & Steiger, 2018). This indicates that the increase in typical overall scholarly usage of Journal of Biomechanics articles results from the research topics published in the journal, growth in the number of articles it publishes (from 521-613 articles per year from 1999 to 2005 to consistently over 1,200 after 2008), and general temporal inflation of the JIF. The scholarly audit culture that overemphasizes the JIF likely drives the metric's inflation through increasing numbers of journals, the articles they publish, the number of references cited per article, and unethical manipulation (Ioannidis & Thombs, 2019).
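The long-term changes discussed for SNIP₃ and JIF₂ can be checked against the 30% standard with simple percent changes. In the Python sketch below, the start and end values are the approximations quoted in the text, with the SNIP₃ starting value of "about twice" average assumed to be 2.0. The periods differ, so setting the journal's change beside the general JIF increase is only a rough juxtaposition, not an inflation adjustment.

```python
def percent_change(start: float, end: float) -> float:
    """Relative change from start to end, in percent."""
    return 100 * (end - start) / start


MEANINGFUL_PCT = 30  # upper end of the 20-30% standard cited in the text

# Approximate start/end values quoted in the text (SNIP3 "about twice" assumed = 2.0).
long_term = {
    "SNIP3, Journal of Biomechanics": (2.0, 1.1),
    "JIF2, Journal of Biomechanics": (1.5, 2.5),
    "JIF values, 1997-2016 (Fischer & Steiger, 2018)": (1.1, 2.2),
}

for label, (start, end) in long_term.items():
    change = percent_change(start, end)
    verdict = "exceeds 30% standard" if abs(change) >= MEANINGFUL_PCT else "below 30% standard"
    print(f"{label}: {change:+.0f}% ({verdict})")
# SNIP3, Journal of Biomechanics: -45% (exceeds 30% standard)
# JIF2, Journal of Biomechanics: +67% (exceeds 30% standard)
# JIF values, 1997-2016 (Fischer & Steiger, 2018): +100% (exceeds 30% standard)
```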

4. RECOMMENDATIONS

Given the results of this study and the well documented limitations of numerous citation metrics and databases cited in this report, biomechanics researchers and scholars in similar interdisciplinary fields should consider four strategies to limit bias in planning and publishing their research. Consistent use of these strategies by scholars in biomechanics could contribute to other research quality initiatives (Glasziou et al., 2014; Ioannidis, 2005; Knudson, 2017; Vagenas et al., 2018) for more meaningful results and faster advancement of knowledge. DORA (2024) and other sources (DORA, n.d.; Hicks et al., 2015; Walters, 2017; Wilsdon et al., 2015) also provide helpful recommendations on avoiding misuse of citation metrics in research.

4.1. Recognize and Avoid Misuse of Journal Citation Metrics

Biomechanics researchers and journal editors should recognize, avoid, and not reward misuse of citation metrics at all levels: article, author, and journal. They should recognize that there is compelling evidence that single, journal-level citation metrics are strongly biased, are inaccurate for individual articles, and are poorly associated with expert peer evaluation of research quality (Haddawy et al., 2016; Mahmood, 2017; Thelwall et al., 2023). They should avoid using any single citation metric as a precise, ratio-level measure of any specific journal quality construct. They should evaluate research reports on their own merits and not reward misuse of citation metrics by assuming that reports are better or worse based on unreliable ranks or tiers from citation metrics. In addition, they should consider taking a more public stand by personally endorsing, and supporting organizational (journal, society) endorsement of, the Declaration on Research Assessment or DORA (n.d.).

4.2. Recognize Citation Metric Bias in Biomechanics and Interdisciplinary Fields

Biomechanists should recognize that there is bias in all journal citation metrics, and that some metrics disadvantage interdisciplinary journals (e.g., biomechanics) relative to journals that are larger, multidisciplinary, or from faster moving disciplines (e.g., biomedical, computer science, genetics), and relative to mega-journals. High-quality interdisciplinary research is difficult and takes time, so relatively lower journal citation metrics for some biomechanics journals are expected and may not be related to lower quality, usage/impact, or prestige. Biomechanics research is interdisciplinary and diverse, so citation patterns vary substantially across research areas and the database subject categories assigned, adding further bias to journal citation metrics. Interdisciplinary researchers should normally seek first to publish in journals relevant to their research topic to reach the scholarly audience, and not chase false visibility and usage in presumed high-impact journals.

4.3. Use Meta-Science for Evidence-Based Interpretation of Citation Metrics

Biomechanists should strive for evidence-based interpretation of multiple citation metrics based on the consensus of meta-science research. Citation metrics should be used primarily at the article and author levels, not the journal level. If journal metrics are required by an institutional policy, it is best to use multiple citation metrics only as confirmatory evidence of the likely usage or prestige expected for a particular journal, in the context of each metric's and database's limitations. One should use field-normalized citation metrics when possible and interpret them carefully, especially in interdisciplinary fields like biomechanics. This is particularly important given the variability in citation patterns across disciplines and within biomechanics, and because different databases use different subject categories and lack a specific biomechanics category. Importantly, do not infer or imply in a communication that any journal citation metric is a proxy for the quality of a research report appearing in a journal (Dougherty & Horne, 2022; Haddawy et al., 2016; Mahmood, 2017; Starbuck, 2005; Thelwall et al., 2023).

4.4. Use Multiple and Thorough Database Searches

Biomechanists, like all researchers, should strive to conduct numerous careful searches of multiple databases to adequately find relevant research. Given the diversity and interdisciplinary nature of biomechanics, research in the field appears in a wide variety of journals. Biomechanics knowledge advances most when all relevant research is synthesized in planning original research and in systematic review reports. The many biases introduced by large variation in citation patterns across disciplines increasingly influence database coverage, search engines, and the relevance ranking of results (Bade, 2007; Delgado-Quirós & Ortega, 2024; 2025; Drivas, 2025; Gusenbauer & Haddaway, 2020; Kiester & Turp, 2022; Knudson, 2022; 2023; Mongeon & Paul-Hus, 2016; Pozsgai et al., 2021).

5. CONCLUSIONS

Eight top cited biomechanics journals related to kinesiology had strongly positively skewed citations to their top cited articles in three major database services. Short- and long-term longitudinal data for the most prestigious journal in the field, the Journal of Biomechanics (Knudson, 2024a; Knudson & Chow, 2008), illustrated the need for evidence-based interpretation of journal citation metrics to avoid biases that adversely affect diverse, slower-moving, interdisciplinary fields. Variation in journal usage metrics for the Journal of Biomechanics showed different and meaningful long-term trends between the overall (JIF₂) and field-normalized (SNIP₃) metrics. The high prestige of the journal is not reflected in the general and commonly misused JIF₂ usage metric. This is a good example of bias in journal metrics, particularly for interdisciplinary journals, and of the problems that result from oversimplification and misuse of journal metrics and from the lack of evidence-based interpretation in disciplinary and interdisciplinary contexts.

REFERENCES

, , (2009) Citation: Statistics a report from the International Mathematical Union (IMU) in cooperation with the International Council of Industrial and Applied Mathematics (ICIAM) and the Institute of Mathematical Statistics (IMS) Statistical Science, 24, 1-14 https://doi.org/10.1214/09-STS285.

, , (2019) Citations, citation indicators, and research quality: An overview of basic concepts and theories SAGE Open, 9, 2158244019829575 https://doi.org/10.1177/2158244019829575.

, , , (2009) Differences in impact factor across fields and over time Journal of the American Society for Information Science and Technology, 60, 27-34 https://doi.org/10.1002/asi.20936.

American Society of Biomechanics (ASB) (n.d.) About https://asbweb.org/about/

(2007) Relevance ranking is not relevance ranking or, when the user is not the user, the search results are not search results Online Information Review, 31, 831-844 https://doi.org/10.1108/14684520710841793.

, , (2006) Journal status Scientometrics, 69, 669-687 https://doi.org/10.1007/s11192-006-0176-z.

, , , (2009) A principal component analysis of 39 scientific impact measures PLoS ONE, 4, e6022 https://doi.org/10.1371/journal.pone.0006022. Article Id (pmcid)

(2017) Confidence intervals for Journal Impact Factors Scientometrics, 111, 1869-1871 https://doi.org/10.1007/s11192-017-2365-3.

, (2014) How to evaluate individual researchers working in the natural and life sciences meaningfully? A proposal of methods based on percentiles of citations Scientometrics, 98, 487-509 https://doi.org/10.1007/s11192-013-1161-y.

, (2015) Methods for the generation of normalized citation impact scores in bibliometrics: Which method best reflects the judgements of experts? Journal of Informetrics, 9, 408-418 https://doi.org/10.1016/j.joi.2015.01.006.

, (2014) Causes for the persistence of impact factor mania mBio, 5 https://doi.org/10.1128/mbio.00064-14. Article Id (pmcid)

Declaration on Research Assessment (DORA) (2024) Guidance on the responsible use of quantitative indicators in research assessment DORA. https://doi.org/10.5281/zenodo.10979644

Declaration on Research Assessment (DORA) (n.d.) San Francisco declaration on research assessment https://sfdora.org/read/

, (2024) Completeness degree of publication metadata in eight free-access scholarly databases Quantitative Science Studies, 5, 31-49 https://doi.org/10.1162/qss_a_00286.

, (2025) Citation counts and inclusion of references in seven free-access scholarly databases: A comparative analysis Journal of Informetrics, 19, 101618 https://doi.org/10.1016/j.joi.2024.101618.

, (2013) Comparing journals from different fields of science and social science through a JCR subject categories normalized impact factor Scientometrics, 95, 645-672 https://doi.org/10.1007/s11192-012-0929-9.

, (2022) Citation counts and Journal Impact Factors do not capture some indicators of research quality in the behavioural and brain sciences Royal Society Open Science, 9, 220334 https://doi.org/10.1098/rsos.220334. Article Id (pmcid)

(2025) The role of online search platforms in scientific diffusion Journal of the Association for Information Science and Technology, 76, 580-603 https://doi.org/10.1002/asi.24959.

(2022) Impact measures: What are they? Scientometrics, 127, 385-406 https://doi.org/10.1007/s11192-021-04053-3.

(2014). On the time evolution of received citations, in different scientific fields: An empirical study. Journal of Informetrics, 8, 13-24. https://doi.org/10.1016/j.joi.2013.10.003

(2018). Dynamics of Journal Impact Factors and limits to their inflation. Journal of Scholarly Publishing, 50, 26-36. https://doi.org/10.3138/jsp.50.1.06

(2010). The difference between popularity and prestige in the sciences and in the social sciences: A bibliometric analysis. Journal of Informetrics, 4, 55-63. https://doi.org/10.1016/j.joi.2009.08.001

(2006). The history and meaning of the Journal Impact Factor. JAMA, 295, 90-93. https://doi.org/10.1001/jama.295.1.90

(2022). Scientific publishing in biomedicine: A brief history of scientific journals. International Journal of Endocrinology and Metabolism, 21, e131812. https://doi.org/10.5812/ijem-131812

(2014). Reducing waste from incomplete or unusable reports of biomedical research. The Lancet, 383, 267-276. https://doi.org/10.1016/S0140-6736(13)62228-X

(2020). Which academic search systems are suitable for systematic reviews or meta-analyses? Evaluating retrieval qualities of Google Scholar, PubMed, and 26 other resources. Research Synthesis Methods, 11, 181-217. https://doi.org/10.1002/jrsm.1378

(2016). A comprehensive examination of the relation of three citation-based journal metrics to expert judgment of journal quality. Journal of Informetrics, 10, 162-173. https://doi.org/10.1016/j.joi.2015.12.005

(2014). How variable are the journal impact measures? Online Information Review, 38, 723-737. https://doi.org/10.1108/oir-05-2014-0102

(1999). Differences in knowledge production between disciplines based on analysis of paper styles and citation patterns. Scientometrics, 46, 73-86. https://doi.org/10.1007/BF02766296

(2020). What is meaningful research and how should we measure it? Scientometrics, 125, 153-169. https://doi.org/10.1007/s11192-020-03649-5

(2015). Bibliometrics: The Leiden Manifesto for research metrics. Nature, 520, 429-431. https://doi.org/10.1038/520429a

(2015). Journal list fetishism and the 'sign of 4' in the ABS guide: A question of trust? Organization, 22, 119-138. https://doi.org/10.1177/1350508413506763

(2005). Why most published research findings are false. PLOS Medicine, 2, e124. https://doi.org/10.1371/journal.pmed.0020124

(2023). The rapid growth of mega-journals: Threats and opportunities. JAMA, 329, 1253-1254. https://doi.org/10.1001/jama.2023.3212

(2019). A user's guide to inflated and manipulated impact factors. European Journal of Clinical Investigation, 49, e13151. https://doi.org/10.1111/eci.13151

(2023). Analyzing and mapping the scientific structure of Iran's biomechanics documents using the co-occurrence analysis. Caspian Journal of Scientometrics, 10, 15. https://doi.org/10.22088/cjs.10.2.15

(2022). Artificial intelligence behind the scenes: PubMed's best match algorithm. Journal of the Medical Library Association, 110, 15-22. https://doi.org/10.5195/jmla.2022.1236

(2013). Impact and prestige of kinesiology-related journals. Comprehensive Psychology, 2. https://doi.org/10.2466/50.17.Cp.2.13

(2015a). Citation rate of highly-cited papers in 100 kinesiology-related journals. Measurement in Physical Education and Exercise Science, 19, 44-50. https://doi.org/10.1080/1091367X.2014.988336

(2017). Confidence crisis of results in biomechanics research. Sports Biomechanics, 16, 425-433. https://doi.org/10.1080/14763141.2016.1246603

(2022). What kinesiology research is most visible to the academic world? Quest, 74, 285-298. https://doi.org/10.1080/00336297.2022.2092880

(2023). Publication metrics and subject categories of biomechanics journals. Journal of Information Science Theory and Practice, 11, 40-50. https://doi.org/10.1633/JISTaP.2023.11.4.3

(2024a). Are there meaningful prestige metrics of kinesiology-related journals? Measurement in Physical Education and Exercise Science, 28, 316-337. https://doi.org/10.1080/1091367X.2024.2341849

(2024b). Center of mass change in the biomechanics citation network. Sports Biomechanics, 23, 3257-3267. https://doi.org/10.1080/14763141.2023.2287030

(2024c). Scopus citation metrics for top and bottom quintile kinesiology-related journals. International Journal of Kinesiology in Higher Education, 8, 343-354. https://doi.org/10.1080/24711616.2024.2416184

(2024). Synthesis of publication metrics in kinesiology-related journals: Proxies for rigor, usage, and prestige. Quest, 76, 93-112. https://doi.org/10.1080/00336297.2023.2237150

(2008). North American perception of the prestige of biomechanics serials. Gait & Posture, 27, 559-563. https://doi.org/10.1016/j.gaitpost.2007.

(2016). Citations: Indicators of quality? The impact fallacy. Frontiers in Research Metrics and Analytics, 1, Article 1. https://doi.org/10.3389/frma.2016.00001

(2017). Correlation between perception-based journal rankings and the Journal Impact Factor (JIF): A systematic review and meta-analysis. Serials Review, 43, 120-129. https://doi.org/10.1080/00987913.2017.1290483

(2022). When a journal is both at the 'top' and the 'bottom': The illogicality of conflating citation-based metrics with quality. Scientometrics, 127, 3683-3694. https://doi.org/10.1007/s11192-022-04402-w

(2016). The journal coverage of Web of Science and Scopus: A comparative analysis. Scientometrics, 106, 213-228. https://doi.org/10.1007/s11192-015-1765-5

(2008). The ups and downs of Journal Impact Factors. The Annals of Occupational Hygiene, 52, 73-82. https://doi.org/10.1093/annhyg/men002

(2020). How to avoid borrowed plumes in academia. Research Policy, 49, 103831. https://doi.org/10.1016/j.respol.2019.103831

(2015). On the stability of citation-based journal rankings. Journal of Informetrics, 9, 990-1006. https://doi.org/10.1016/j.joi.2015.08.005

(2023). Growth and distribution of research journals across the world. Journal of Information Science Theory and Practice, 11, 22-36. https://doi.org/10.1633/JISTaP.2023.11.2.3

(2017). Citation analysis of scientific categories. Heliyon, 3. https://doi.org/10.1016/j.heliyon.2017.e00300

(2021). Irreproducibility in searches of scientific literature: A comparative analysis. Ecology and Evolution, 11, 14658-14668. https://doi.org/10.1002/ece3.8154

(2021). Web of Science (WoS) and Scopus: The titans of bibliographic information in today's academic world. Publications, 9, 12. https://doi.org/10.3390/publications9010012

(2019). Current concepts on bibliometrics: A brief review about impact factor, Eigenfactor score, CiteScore, SCImago Journal Rank, Source-Normalised Impact per Paper, H-index, and alternative metrics. Irish Journal of Medical Science (1971-), 188, 939-951. https://doi.org/10.1007/s11845-018-1936-5

(1983). Statistical reliability of comparisons based on the citation impact of scientific publications. Scientometrics, 5, 59-73. https://doi.org/10.1007/bf02097178

(1997). Why the impact factor of journals should not be used for evaluating research. BMJ, 314, 497. https://doi.org/10.1136/bmj.314.7079.497

(2020). The diverse niches of megajournals: Specialism within generalism. Journal of the Association for Information Science and Technology, 71, 800-816. https://doi.org/10.1002/asi.24299

(1998). Unscientific practice flourishes in science. British Medical Journal, 316, 1036. https://doi.org/10.1136/bmj.316.7137.1036

(2005). How much better are the most-prestigious journals? The statistics of academic publication. Organization Science, 16, 180-200. https://doi.org/10.1287/orsc.1040.0107

(2019). What do citation counts measure? An updated review of studies on citations in scientific documents published between 2006 and 2018. Scientometrics, 121, 1635-1684. https://doi.org/10.1007/s11192-019-03243-4

(2023). In which fields do higher impact journals publish higher quality articles? Scientometrics, 128, 3915-3933. https://doi.org/10.1007/s11192-023-04735-0

(2015). In defiance of folly: Journal rankings, mindless measures and the ABS Guide. Critical Perspectives on Accounting, 26, 37-46. https://doi.org/10.1016/j.cpa.2014.02.004

(2018). Thirty-year trends of study design and statistics in applied sports and exercise biomechanics research. International Journal of Exercise Science, 11, 239-259. https://doi.org/10.70252/YWWI1479

(2000). Interdisciplinary dynamics of modern science: Analysis of cross-disciplinary citation flows. Research Evaluation, 9, 183-187. https://doi.org/10.3152/147154400781777241

(2021). The lack of meaningful boundary differences between Journal Impact Factor quartiles undermines their independent use in research evaluation. Scientometrics, 126, 1495-1525. https://doi.org/10.1007/s11192-020-03801-1

(2017). Citation-based journal rankings: Key questions, metrics, and data sources. IEEE Access, 5, 22036-22053. https://doi.org/10.1109/ACCESS.2017.2761400

(2016). A review of the literature on citation impact indicators. Journal of Informetrics, 10, 365-391. https://doi.org/10.1016/j.joi.2016.02.007

(2025). Measuring knowledge flow in the interdisciplinary field of biosecurity: Full counting method or fractional counting method? Scientometrics, 130, 1101-1128. https://doi.org/10.1007/s11192-025-05249-7

(2011). Journal list fetishism and the perversion of scholarship: Reactivity and the ABS list. Organization, 18, 429-442. https://doi.org/10.1177/1350508411403532

(2015). The metric tide: Report of the independent review of the role of metrics in research assessment and management. https://doi.org/10.13140/RG.2.1.4929.1363

(2011). Publication and citation in biomechanics: A comparison with closely related fields (2003-2010). Journal of Mechanics in Medicine and Biology, 11, 705-711. https://doi.org/10.1142/S0219519411004836

(2012). Quantifying the influence of scientists and their publications: Distinguishing between prestige and popularity. New Journal of Physics, 14, 033033. https://doi.org/10.1088/1367-2630/14/3/033033