1. INTRODUCTION

In recent years, artificial intelligence (AI) in education has revolutionised teaching techniques and opened new possibilities for individualised and adaptive learning (Alarefi, 2024). Educational institutions increasingly use AI-driven solutions such as intelligent tutoring systems, automated grading software, and data-informed learning analytics to enhance student engagement, instructional effectiveness, and learning pathways (Cukurova, 2025). Although AI’s potential benefits in education are well known, few studies have examined the factors that determine students’ acceptance of these technologies or the technologies’ academic and cognitive outcomes. Understanding these factors is crucial for optimising AI in education while reducing risks such as overreliance on automation and ethical problems including data privacy and algorithmic bias (Samala et al., 2024).

Well-established models and theories, such as the technology acceptance model (TAM) by Davis (1989) and the unified theory of acceptance and use of technology (UTAUT) by Venkatesh et al. (2003), show that perceived usefulness (PU), ease of use, and social influence strongly influence students’ acceptance of digital learning tools. AI also has drawbacks, including opaque algorithmic decision-making, data security concerns, and potential consequences for critical thinking (Mouta et al., 2024). Over-reliance on AI-driven suggestions may reduce students’ problem-solving autonomy and creativity (Roll & Wylie, 2016), although other research shows that real-time feedback and personalised information can increase learning efficiency (Chen et al., 2020). The psychological and social effects of AI implementation on student motivation, classroom dynamics, and teacher-student relationships require further study.

Despite growing global interest in AI in education, most research has focused on Western and East Asian contexts, leaving many other cultural and educational systems underexplored (Assaf et al., 2024). This study addresses this gap by examining students’ use of AI and its implications in Saudi Arabia, which is undergoing rapid digital transformation. Under Vision 2030, Saudi Arabia is investing in AI and digital education to diversify its economy and build a knowledge-based economy (Alarefi, 2023a). The Saudi Ministry of Education has implemented intelligent learning platforms, AI-driven tutoring systems, and digital literacy programs (Alarefi, 2023b). The success of these programs depends on students’ willingness to use AI technologies and on the technologies’ impact on educational performance and cognitive skill development.

In the Saudi context, TAM and UTAUT suggest that several factors can drive students’ use of AI: its usefulness, its ease of use, and the influence of others, such as fellow students, teachers, schools, and the wider community. Students’ self-efficacy is another critical determinant of usage (Ali & Allawi, 2024). However, few studies have examined students’ AI usage. In Saudi Arabia, despite strong Internet penetration and a tech-savvy youth population (Alanzi et al., 2023), legacy educational methods and reluctance to change may limit the use of AI for educational purposes (Ghanem & Alshahrani, 2021). Ethical concerns regarding data privacy and AI decision-making may also affect students’ trust in these technologies (AlMulhim, 2023). Understanding these contextual factors is essential for creating AI-driven educational tools that match local values and learning styles.

This research contributes a regional perspective from Saudi Arabia to the global discussion on AI in education and informs Saudi policymakers and educators. It identifies Saudi students’ motivations for adopting AI and assesses its academic and cognitive effects, offering evidence-based recommendations for improving AI integration in Saudi classrooms. The findings also contribute to ethical and pedagogical discussions about AI in education, helping to ensure that technological innovation supports sustainable and fair learning. The remaining sections present the literature review, followed by the research methodology, findings, discussion, and conclusion.

2. LITERATURE REVIEW

2.1. Theoretical Framework

The theoretical framework of this study draws on three theories: TAM, UTAUT, and Bandura’s (1986) social cognitive theory. TAM, developed by Davis (1989), emphasises PU and perceived ease of use (PEOU) as key variables in technology acceptance. These constructs capture learners’ rational assessments of the academic advantages of AI technology and of whether those advantages justify the effort required to use it. TAM acknowledges that adoption decisions entail psychological and social elements as well as value judgements. The present study also draws on UTAUT (Venkatesh et al., 2003), which, like TAM, focuses on usefulness (performance expectancy) and the effort needed (effort expectancy). In addition, UTAUT introduces social influence, recognising that peer and instructor expectations drive educational adoption decisions (Simango et al., 2024). Combining these theories into a robust framework for studying AI in education offers several benefits. The framework integrates AI-related challenges while maintaining strong psychological assumptions, can both explain adoption decisions and predict educational outcomes, and offers practical advice to educators and policymakers who use AI tools to maximise educational benefits while reducing students’ fears and psychological barriers to adoption.

2.2. Artificial Intelligence Adoption

A substantial body of research has examined the deployment of AI in education and its implications for learning outcomes. This review draws on that research, covering technology adoption theory, AI-specific characteristics, and educational outcomes, with a focus on the distinctive context of Saudi Arabia’s educational transformation. TAM, which emphasises PU and PEOU, has dominated research on educational technology adoption (Davis, 1989). A meta-analysis confirms the continuing importance of these variables, which account for 40% of adoption intentions across educational contexts (Scherer et al., 2019). Integrating social cognitive theory, especially self-efficacy as a mediator, has extended the model: students’ confidence in their technical competence increases PU by 22-35% across instructional technologies (Taherdoost, 2018). In higher education, institutional support systems and peer networks influence up to 28% of adoption decisions (Al-Rahmi et al., 2021). Together, these studies show that technology adoption is multifaceted, involving cognitive, emotional, and social factors.

AI technology’s unique traits necessitate new adoption frameworks. Trust is important: transparent algorithmic operations increase the likelihood of adoption by 31% compared with opaque systems (Araujo et al., 2020). Ethical issues are also crucial, since 68% of students worry about data safety in educational AI applications (Bond & Bedenlier, 2019). Culture matters as well: social influence has a 19% stronger effect on adoption decisions in collectivist cultures than in individualist ones (Nguyen et al., 2023). AI adoption frameworks therefore need to be adapted to both the technology’s unique traits and the cultural context.

Empirical research on AI in education reveals both promise and challenges. Well-deployed AI systems improve learning efficiency by 18-22% over time (Luckin et al., 2016). Meta-analytic evidence indicates particular benefits for personalized learning, with adaptive tutoring systems demonstrating effect sizes of 0.47 on standardized achievement measures (Chen et al., 2022). However, concerning findings have emerged regarding cognitive impacts, with experimental studies showing 12-15% declines in critical thinking metrics among frequent AI tool users (Penglong et al., 2024). Perhaps most significantly, research identifies substantial variation in outcomes based on students’ self-efficacy levels, with high-efficacy students showing 27% greater learning gains than their low-efficacy counterparts when using the same AI systems (Alam, 2021). These differential effects underscore the importance of considering individual differences in AI adoption strategies.

Saudi Arabia’s Vision 2030 initiative has positioned the nation as a particularly informative case study of large-scale educational AI implementation. Government investments exceeding $20 billion have created one of the world’s most comprehensive smart education infrastructures (Abulibdeh et al., 2024). Early adoption studies in Saudi universities report promising integration rates (68%) for basic AI tools, though more advanced applications show substantially lower adoption (Abulibdeh et al., 2024). Qualitative research highlights the cultural specificity of adoption patterns, with Islamic values influencing perceptions of appropriate AI use in 43% of student respondents (AlMulhim, 2023). Despite these advancements, significant research gaps persist, particularly regarding: (1) the mediating mechanisms through which self-efficacy influences AI adoption, (2) the long-term cognitive consequences of educational AI use, and (3) the development of culturally appropriate implementation frameworks. The current study addresses these gaps through its integrated theoretical approach and empirical investigation of AI adoption in Saudi classrooms.

2.3. Conceptual Framework

This study combines established theoretical perspectives with AI-specific variables to examine the integration of AI technologies in higher education. The framework organises key constructs into three dimensions, namely antecedents, mediating processes, and educational outcomes, providing a comprehensive perspective on AI integration in education. Based on TAM, PU is students’ assessment of the potential of AI technologies to improve learning and academic performance, whereas PEOU reflects the cognitive effort students expect to invest in using these technologies. Based on UTAUT, social influence captures the impact of institutional policies, teacher endorsements, and peer behaviour on technology adoption patterns. Two AI-specific constructs complete the antecedent dimension: trust in AI systems, which reflects perceived algorithmic reliability and fairness, and ethical concerns, which encompass data protection and AI bias.

According to Bandura’s (1986) social cognitive theory, self-efficacy is the main mechanism through which antecedent factors are translated into adoption behaviour. This mediation operates through three interwoven channels. Through the competence channel, self-efficacy boosts students’ confidence in their ability to use AI tools, which increases PU and uptake. Through the anxiety-reduction channel, higher self-efficacy reduces technology-related unease and thus usability concerns. Through the readiness channel, self-efficacy increases students’ readiness to engage with AI systems, which strengthens trust and adoption. Therefore, self-efficacy is proposed to mediate the relationships of PU, PEOU, and trust in AI with AI adoption.

The current study also proposes direct effects of AI adoption on two educational outcomes: academic performance and cognitive skill development. Academic performance is reflected in outcomes such as course grades and knowledge retention, whereas cognitive skill development refers to improvements in critical thinking, problem-solving, and creativity. AI integration in education thus has both immediate consequences (academic performance) and longer-term consequences (cognitive skill development).

2.4. Perceived Usefulness and Artificial Intelligence Adoption

Based on TAM, PU is a critical driver of technology adoption (Davis, 1989). In a meta-analysis of 161 studies, Scherer et al. (2019) found that PU explained 34% of learning technology adoption intentions. Alam (2021) found that students who believed AI writing tools improved essay quality were 2.3 times more likely to use them. In Middle Eastern universities, Al-Emran et al. (2020) found that perceived learning enhancement was the strongest predictor of educational technology adoption. Therefore, PU is expected to positively affect AI tool adoption.

H1: PU will positively influence AI tool adoption.

2.5. Perceived Ease of Use and Artificial Intelligence Adoption

Based on TAM and UTAUT, ease of use is an essential factor for promoting technology adoption. Empirical evidence supports the enduring relationship between ease of use and technology adoption, particularly for novel technologies. UTAUT research found ease of use effects were strongest during initial implementation phases (β=0.35) (Venkatesh et al., 2003). In AI-specific research, Araujo et al. (2020) demonstrated that reducing interface complexity increased adoption rates by 28% in their experimental study. These findings are particularly relevant given the technical nature of AI tools. A study by Chen et al. (2020) showed students rated ease of use as their primary concern (72% of respondents) when first encountering AI tutoring systems. Therefore, PEOU is expected to have a positive effect on AI adoption.

H2: PEOU will positively influence AI tool adoption.

2.6. Trust in Artificial Intelligence and Artificial Intelligence Adoption

Trust has emerged as a critical factor in AI adoption because of concerns about algorithmic opacity. In their foundational work on technology trust, Warkentin et al. (2002) found that trust explained an additional 18% of the variance in adoption beyond TAM factors. In educational AI, a multinational study by Bond and Bedenlier (2019) showed that trust was the second strongest predictor (β=0.33) after PU. Experimental research by Araujo et al. (2020) demonstrated that increasing algorithmic transparency boosted trust scores by 41% and subsequent adoption rates by 29%. Therefore, in this study, trust in AI is expected to positively affect AI adoption.

H3: Trust in AI systems will positively predict adoption of AI tools.

2.7. Social Influence and Artificial Intelligence Adoption

The social dimension of technology adoption has shown particular strength in educational settings. In a study of 783 university students, Al-Rahmi et al. (2021) found that social influence accounted for 27% of the variance in learning technology adoption. Bellaaj et al. (2015) reported that social influence effects were 19% stronger in collectivist cultures than in individualist ones. More recent AI-specific research by Alangari et al. (2022) in Saudi universities found that peer recommendations increased AI tool trial rates by 43%, while instructor endorsements increased sustained usage by 31%. Accordingly, social influence is expected to positively affect AI adoption.

H4: Social influence will be positively associated with AI tool adoption.

2.8. Ethical Concerns and Artificial Intelligence Adoption

Growing research highlights ethical considerations as barriers to AI adoption in education. A survey by Bond and Bedenlier (2019) found that 68% of students expressed significant data privacy concerns about educational AI. Chiu et al. (2023) showed that institutions with poor ethical transparency had 37% lower AI adoption rates. In the Saudi context, qualitative research by AlMulhim (2023) revealed that Islamic ethical considerations influenced 43% of students’ evaluations of AI tools, often creating hesitancy about adoption. In this study, ethical concerns are expected to have a negative effect on AI adoption.

H5: Ethical concerns will negatively affect AI tool adoption.

2.9. Self-Efficacy as a Mediator

Social cognitive theory establishes self-efficacy as critical for converting beliefs into action. A meta-analysis by Taherdoost (2018) found that self-efficacy mediated 22-35% of PU effects across technology studies. In AI education research, Alam (2021) showed that self-efficacy accounted for 28% of the total effect of PU on adoption. This mediation appears particularly strong for complex technologies (Roll & Wylie, 2016). Therefore, this study proposes that self-efficacy mediates the effect of PU on AI adoption.

H6: Self-efficacy will mediate the positive relationship between PU and AI tool adoption.

Research consistently demonstrates the role of self-efficacy in overcoming usability challenges. The seminal work of Compeau and Higgins (1995) showed that self-efficacy mediated 41% of ease-of-use effects. Recent AI studies reveal that this mediation is particularly crucial during initial adoption phases. Chen et al. (2020) found that students with high self-efficacy overcame early usability barriers 2.1 times faster than their low self-efficacy peers. This aligns with the finding of Al-Emran et al. (2020) that self-efficacy mediated 33% of the ease-adoption relationship in Middle Eastern e-learning contexts. Accordingly, the following is proposed:

H7: Self-efficacy will mediate the positive relationship between PEOU and AI tool adoption.

Emerging research identifies self-efficacy as key to translating trust into adoption behaviour. Tapalova and Zhiyenbayeva (2022) demonstrated that students with high self-efficacy were 3.2 times more likely to act on their trust in AI systems. A path analysis by Alam (2021) revealed that self-efficacy mediated 24% of trust’s total effect on AI adoption, and Araujo et al. (2020) likewise showed that self-efficacy mediated the effect of trust on technology adoption. Accordingly, the following is proposed:

H8: Self-efficacy will mediate the positive relationship between trust in AI and AI tool adoption.

2.10. Artificial Intelligence Adoption and Educational Outcome

Empirical evidence links educational technology adoption to measurable learning gains. A meta-analysis by Luckin et al. (2016) found that proper AI tool use increased learning efficiency by 18-22%. Specific to academic outcomes, Chen et al. (2020) reported an effect size of 0.47 on course grades from adaptive tutoring systems. In the Saudi context, Abulibdeh et al. (2024) showed that AI-adopting classrooms outperformed control classrooms by 11% on standardized exams. Scherer et al. (2019) found that adopting students had 27% higher assignment completion rates. Therefore, it is proposed that AI adoption will increase students’ academic performance.

H9: Adoption of AI tools will positively influence academic performance.

Research suggests that AI tools can enhance higher-order thinking when properly implemented. Taxonomy-informed studies by Huang et al. (2021) show that certain AI designs boost analysis and evaluation skills. However, effects depend on usage patterns: Roll and Wylie (2016) found that students who used AI as a complement to their own thinking showed 19% greater gains in critical thinking. Recent work by Alam (2021) identified an optimal usage threshold (3-5 hours weekly) beyond which cognitive benefits plateaued or declined, suggesting nuanced relationships that require further investigation. Thus, this study examines the effect of AI adoption on cognitive skill development and proposes a positive relationship.

H10: Adoption of AI tools will positively affect cognitive skill development.

3. RESEARCH METHODOLOGY

This quantitative study employed a cross-sectional survey design to examine the predictors and consequences of AI tool adoption among university students in Saudi Arabia. The target population comprised undergraduate students enrolled in public universities across Saudi Arabia. A multi-stage sampling approach was used to ensure representative geographical coverage and disciplinary diversity. First, four universities in different regions were purposively selected based on their regional distribution, student population size, and established e-learning infrastructure. Second, within each university, stratified random sampling was used to select participants proportionally across academic disciplines (humanities, health sciences, and business) and years of study (first to fourth year). The sample size was guided by Cochran’s formula for finite populations, targeting a 95% confidence level with a 5% margin of error; on this basis, 1,200 students (300 from each university) were invited to participate.
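A minimal sketch of Cochran’s calculation, assuming maximum variability (p = 0.5), a two-sided 95% confidence level, and a 5% margin of error as stated above. The population size is not reported in the text, so the 20,000 used in the example is a hypothetical placeholder, and `cochran_sample_size` is an illustrative helper rather than part of the study’s materials. The formula returns the minimum number of completed responses; any inflation for expected non-response is a separate design decision.

```python
import math
from statistics import NormalDist


def cochran_sample_size(population=None, confidence=0.95, margin=0.05, p=0.5):
    """Cochran's minimum sample size, with the finite-population correction if N is given."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided critical value (about 1.96)
    n0 = z ** 2 * p * (1 - p) / margin ** 2               # infinite-population sample size
    if population is not None:
        n0 = n0 / (1 + (n0 - 1) / population)             # Cochran's finite-population correction
    return math.ceil(n0)                                  # round up to whole respondents


print(cochran_sample_size())        # large-population case
print(cochran_sample_size(20_000))  # hypothetical population of 20,000 students
```

Without a population size the function returns the infinite-population minimum; supplying N applies the finite-population correction, which can only lower the requirement.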

The study used a structured questionnaire developed through an extensive four-phase process. In the first phase, items were adapted from validated instruments in the technology acceptance literature, and AI-specific constructs were incorporated. The survey comprised six sections. The first section collected demographic information (age, gender, and major), and the remaining sections measured the study variables. PU (six items) and PEOU (five items) were adapted from Davis (1989), and social influence (four items) from Venkatesh et al. (2003). Trust in AI (four items) was adapted from Lian (2015), ethical concerns (four items) from Bond and Bedenlier (2019), and AI adoption (five items) from Venkatesh et al. (2003). Self-efficacy (five items) was adapted from Alam (2021), academic performance (four items) from Chen et al. (2022), and cognitive skill development (five items) from Kayali and Alaaraj (2020).

In the second phase, the instrument was validated. A panel of five experts reviewed the initial English version and provided feedback on item clarity, relevance, and cultural appropriateness; the content validity index exceeded the recommended threshold of 0.80 for all scales. In the third phase, the questionnaire was translated into Arabic following a forward-backward translation protocol: two bilingual academics independently translated the instrument, and discrepancies were then reconciled. In the fourth phase, the Arabic questionnaire was pilot tested with 40 students (10 from each university). Reliability analysis showed high internal consistency (Cronbach’s alpha ranged from 0.83 to 0.92 across scales), and minor adjustments were made to improve item clarity based on pilot feedback.
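The pilot reliability check can be illustrated with the standard Cronbach’s alpha formula: alpha = k / (k - 1) * (1 - sum of item variances / variance of the summed scale score), where k is the number of items. The sketch below is illustrative only; the six respondents and four items are hypothetical, not pilot data, and `cronbach_alpha` is a helper written for this example.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_variances = [variance(item) for item in zip(*responses)]  # one variance per item column
    total_variance = variance([sum(row) for row in responses])     # variance of scale totals
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical 5-point Likert answers: six respondents x four items of one scale.
pilot = [
    [4, 5, 4, 4],
    [3, 3, 4, 3],
    [5, 5, 5, 4],
    [2, 3, 2, 2],
    [4, 4, 3, 4],
    [3, 2, 3, 3],
]
print(round(cronbach_alpha(pilot), 3))
```

Sample variances (n - 1 denominator) are used throughout; because the same denominator appears in numerator and denominator of the ratio, the choice does not change alpha.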

Data were collected over an 8-week period (November 2024 to January 2025) through an online survey platform. The researcher coordinated with university administrators to distribute the survey through official channels; students received the survey link, followed by weekly reminders. A total of 357 responses were received, a response rate of 29.7%. The sample characteristics mirrored the target population in terms of gender distribution, academic level, and discipline representation.

A comprehensive data-cleaning protocol was implemented. Cases with more than 20% missing items (n=18) were excluded. For the remaining missing values, multiple imputation using chained equations was employed. Little’s missing completely at random (MCAR) test was non-significant (χ2=32.18, p=0.14), so the MCAR assumption was not rejected. Outlier screening, in which multivariate outliers were identified using Mahalanobis distance (p<0.001), led to the removal of 22 responses. Extreme values in responses verified as legitimate were winsorized at the 95th percentile. All variables showed acceptable skewness and kurtosis (absolute values below 1.0). Variance inflation factors ranged from 1.12 to 2.87, well below the threshold of 5, indicating no concerning multicollinearity among predictors. A comparison of early (n=192) and late (n=125) respondents revealed no significant differences in demographic characteristics or scale scores (all p>0.05), suggesting minimal non-response bias. In total, 317 responses were valid and complete.
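The outlier screening described above can be sketched as follows, assuming each respondent is represented by a vector of scale scores. Under multivariate normality, squared Mahalanobis distances approximately follow a chi-square distribution with degrees of freedom equal to the number of variables, so a case is flagged when its distance exceeds the critical value at p<0.001. The example uses two hypothetical variables, for which that critical value has the closed form -2 ln(0.001); with more variables it would come from a chi-square table or `scipy.stats.chi2.ppf(0.999, df)`. All helper names are illustrative, and `winsorize_upper` assumes one-sided capping at the 95th percentile, since the text does not say whether the lower tail was also capped.

```python
import math
from statistics import mean, quantiles


def invert(matrix):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(matrix)
    aug = [list(map(float, row)) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("covariance matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def mahalanobis_sq(rows):
    """Squared Mahalanobis distance of each row from the sample centroid."""
    n, k = len(rows), len(rows[0])
    centroid = [mean(col) for col in zip(*rows)]
    centered = [[x - m for x, m in zip(row, centroid)] for row in rows]
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(k)]
           for i in range(k)]  # sample covariance matrix
    inv = invert(cov)
    return [sum(c[i] * inv[i][j] * c[j] for i in range(k) for j in range(k))
            for c in centered]


def flag_outliers(rows, critical):
    """Indices of rows whose squared distance exceeds the chi-square critical value."""
    return [i for i, d2 in enumerate(mahalanobis_sq(rows)) if d2 > critical]


def winsorize_upper(values, pct=95):
    """Cap values above the given percentile (inclusive method) at that percentile."""
    cap = quantiles(values, n=100, method="inclusive")[pct - 1]
    return [min(v, cap) for v in values]


# Hypothetical data: 27 well-behaved respondents on two scale scores plus one extreme case.
grid = [(x, y) for x in (-1, 0, 1) for y in (-1, 0, 1)]
rows = [[float(x), float(y)] for x, y in grid * 3] + [[20.0, 0.0]]

critical = -2 * math.log(0.001)  # chi-square critical value for df = 2 at p = 0.001
print(flag_outliers(rows, critical))
print(max(winsorize_upper([float(v) for v in range(1, 101)])))
```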

4. FINDINGS

4.1. Descriptive Information

The final sample of 317 students from four Saudi universities showed a balanced demographic distribution, with 48% female respondents and a proportional distribution across academic years and majors. All respondents were under 25 years of age, consistent with the study’s focus on undergraduate students. Initial variable analysis indicated moderate-to-high scores for PU (mean [M]=3.82, standard deviation [SD]=0.73), PEOU (M=3.71, SD=0.81), and social influence (M=3.62, SD=0.81), with more modest levels of trust in AI systems (M=3.33, SD=0.93). Ethical concerns showed considerable variability (M=3.51, SD=1.0), reflecting divergent student attitudes toward AI adoption. The mean adoption score (M=3.22, SD=0.83) indicates that most students engage with AI tools occasionally rather than extensively. Self-efficacy was at a moderate level (M=3.1, SD=0.5), as were the educational outcomes: academic performance (M=3.11, SD=0.31) and cognitive skill development (M=3.19, SD=0.53).

4.2. Measurement Model

Following the recommendation of Hair et al. (2023), the first step in evaluating the measurement model was to assess the factor loadings. All loadings were acceptable except those of EC1 (ethical concerns), SE3 (self-efficacy), and CSD3 (cognitive skill development), which were deleted. Table 1 reports the loadings of all items, including the deleted ones, together with the source of each scale (Alam, 2021; Bond & Bedenlier, 2019; Chen et al., 2022; Davis, 1989; Kayali & Alaaraj, 2020; Lian, 2015; Venkatesh et al., 2003).

Table 1

Factor loading

| Construct | Item code | Item statement | Source | Status | Factor loading |
|---|---|---|---|---|---|
| Perceived usefulness | PU1 | Using AI improves my academic performance | Davis (1989) | Retained | 0.75 |
| | PU2 | AI helps me complete academic tasks more efficiently | | Retained | 0.91 |
| | PU3 | AI tools enhance the quality of my academic work | | Retained | 0.85 |
| | PU4 | Using AI is beneficial for my learning outcomes | | Retained | 0.81 |
| | PU5 | AI usage contributes positively to my academic success | | Retained | 0.74 |
| | PU6 | AI tools are a valuable asset in my education | | Retained | 0.79 |
| Perceived ease of use | PEOU1 | AI tools are easy to use | Davis (1989) | Retained | 0.73 |
| | PEOU2 | Learning to use AI tools is easy for me | | Retained | 0.88 |
| | PEOU3 | I find AI tools to be user-friendly | | Retained | 0.81 |
| | PEOU4 | Interacting with AI tools does not require a lot of effort | | Retained | 0.84 |
| | PEOU5 | It is easy for me to become skilful at using AI tools | | Retained | 0.76 |
| Social influence | SI1 | People important to me think I should use AI tools | Venkatesh et al. (2003) | Retained | 0.91 |
| | SI2 | Instructors expect me to use AI tools in learning | | Retained | 0.87 |
| | SI3 | My peers think using AI tools is beneficial | | Retained | 0.71 |
| | SI4 | I feel social pressure to use AI tools | | Retained | 0.72 |
| Trust in AI | TAI1 | I trust the decisions made by AI tools in academic settings | Lian (2015) | Retained | 0.73 |
| | TAI2 | AI systems used in education are reliable | | Retained | 0.73 |
| | TAI3 | I believe AI tools make fair academic suggestions | | Retained | 0.79 |
| | TAI4 | I am confident in the accuracy of AI tools | | Retained | 0.77 |
| Ethical concerns | EC1 | I worry AI might misuse my data | Bond and Bedenlier (2019) | Deleted | 0.53 |
| | EC2 | AI tools might breach student privacy | | Retained | 0.82 |
| | EC3 | AI in education may cause ethical issues | | Retained | 0.79 |
| | EC4 | The use of AI might result in unfair grading | | Retained | 0.73 |
| Self-efficacy | SE1 | I am confident in my ability to use AI tools effectively | Alam (2021) | Retained | 0.75 |
| | SE2 | I can complete tasks using AI without help | | Retained | 0.77 |
| | SE3 | I feel confident troubleshooting AI tools when issues arise | | Deleted | 0.56 |
| | SE4 | I am capable of learning new AI features | | Retained | 0.78 |
| | SE5 | I feel well-prepared to use AI tools in my studies | | Retained | 0.79 |
| Academic performance | AP1 | My academic results have improved since using AI tools | Chen et al. (2022) | Retained | 0.81 |
| | AP2 | AI tools help me complete academic assignments more effectively | | Retained | 0.76 |
| | AP3 | I score better on tests when I use AI tools | | Retained | 0.81 |
| | AP4 | I meet academic deadlines better with the help of AI tools | | Retained | 0.77 |
| Cognitive skill development | CSD1 | AI tools help improve my problem-solving skills | Kayali and Alaaraj (2020) | Retained | 0.79 |
| | CSD2 | I use AI tools to enhance my critical thinking | | Retained | 0.91 |
| | CSD3 | AI helps enhance my analytical and critical thinking skills | | Deleted | 0.51 |
| | CSD4 | I use AI to explore complex ideas in my field | | Retained | 0.87 |
| | CSD5 | AI contributes to my creative thinking in academic work | | Retained | 0.73 |
| Adoption of AI | AI1 | I regularly use AI tools for my studies | Venkatesh et al. (2003) | Retained | 0.87 |
| | AI2 | I integrate AI tools into my academic routines | | Retained | 0.91 |
| | AI3 | I prefer to use AI tools when available | | Retained | 0.88 |
| | AI4 | AI tools are a regular part of my learning process | | Retained | 0.89 |
| | AI5 | I rely on AI tools to complete academic tasks | | Retained | 0.86 |

As shown in Table 2, the measurement model results support the reliability and convergent validity of the constructs. All scales demonstrate strong reliability, with Cronbach’s alpha (CA) and composite reliability above 0.70; the self-efficacy scale performs particularly well. Convergent validity was also established, as the average variance extracted exceeds 0.50 for every construct.

Table 2

Reliability and validity

| Variable | Cronbach’s alpha | Composite reliability | Average variance extracted |
|---|---|---|---|
| Academic performance | 0.840 | 0.874 | 0.585 |
| Adoption of AI | 0.859 | 0.860 | 0.842 |
| Cognitive skill development | 0.851 | 0.858 | 0.841 |
| Ethical concern | 0.731 | 0.747 | 0.531 |
| Perceived ease of use | 0.851 | 0.852 | 0.872 |
| Perceived usefulness | 0.838 | 0.841 | 0.802 |
| Self-efficacy | 0.926 | 0.935 | 0.599 |
| Social influence | 0.916 | 0.920 | 0.749 |
| Trust in AI | 0.883 | 0.883 | 0.881 |
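Composite reliability and AVE are conventionally computed from standardized outer loadings: CR = (sum of loadings)² / ((sum of loadings)² + sum of (1 - loading²)), and AVE = mean of the squared loadings. The sketch below assumes those standard formulas and applies them to the six PU loadings listed in Table 1 simply to show the arithmetic; the helper names are illustrative, and PLS-SEM software reports both statistics directly.

```python
def composite_reliability(loadings):
    """Composite reliability (rho_c) from standardized outer loadings."""
    total = sum(loadings)
    error_variance = sum(1 - lam ** 2 for lam in loadings)  # indicator error variances
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings):
    """Mean of the squared standardized loadings."""
    return sum(lam ** 2 for lam in loadings) / len(loadings)


pu_loadings = [0.75, 0.91, 0.85, 0.81, 0.74, 0.79]  # PU1-PU6 as listed in Table 1
cr = composite_reliability(pu_loadings)
ave = average_variance_extracted(pu_loadings)
print(round(cr, 3), round(ave, 3))
```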

Discriminant validity was assessed using the heterotrait-monotrait ratio of correlations (HTMT). All HTMT values were below the threshold of 0.85, indicating no discriminant validity issues. These psychometric results give confidence in the quality of the measurement approach and the meaningfulness of the subsequent hypothesis tests.
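HTMT is the mean correlation between items of different constructs divided by the geometric mean of the average correlations among items of the same construct. A minimal sketch, assuming positively keyed items and a hypothetical four-item correlation matrix (two items per construct); each construct needs at least two items for its within-construct average to exist, and `htmt` is an illustrative helper.

```python
from itertools import combinations
from math import sqrt
from statistics import mean


def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs, given an item correlation matrix."""
    hetero = mean(corr[i][j] for i in items_a for j in items_b)          # between-construct
    mono_a = mean(corr[i][j] for i, j in combinations(items_a, 2))       # within construct A
    mono_b = mean(corr[i][j] for i, j in combinations(items_b, 2))       # within construct B
    return hetero / sqrt(mono_a * mono_b)


# Hypothetical correlations: items 0-1 measure construct A, items 2-3 measure construct B.
corr = [
    [1.0, 0.6, 0.3, 0.3],
    [0.6, 1.0, 0.3, 0.3],
    [0.3, 0.3, 1.0, 0.5],
    [0.3, 0.3, 0.5, 1.0],
]
value = htmt(corr, [0, 1], [2, 3])
print(round(value, 3), "below 0.85" if value < 0.85 else "at or above 0.85")
```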

4.3. Structural Model

In accordance with recent evaluation standards for partial least squares structural equation modeling (PLS-SEM) (Hair et al., 2023), model fit was assessed using several global fit indices. The standardized root mean square residual (SRMR) was 0.056, below the recommended threshold of 0.08, indicating good overall model fit. The normed fit index (NFI) was 0.91, meeting the minimum criterion of 0.90 and further supporting model adequacy. The discrepancy measures d_ULS and d_G were 0.225 and 0.193, respectively, within acceptable limits for PLS-SEM, indicating no significant model misfit. Although the chi-square statistic (χ2=1,985.56) is reported, it is primarily descriptive in PLS-SEM and is not interpreted as it would be in covariance-based structural equation modeling (CB-SEM). Together, these indicators show that the structural model fits the empirical data acceptably, supporting the robustness of the hypothesized relationships. Table 3 summarizes the fit indices and the thresholds recommended by Hair et al. (2023).

Table 3

Model fit indices for the structural model

| Model fit index | Value | Recommended threshold | Reference | Interpretation |
|---|---|---|---|---|
| Standardized root mean square residual | 0.056 | <0.08 | Hair et al. (2023) | Good model fit |
| Normed fit index | 0.91 | >0.90 | Hair et al. (2023) | Acceptable fit |
| d_ULS (unweighted least squares discrepancy) | 0.225 | As low as possible | Hair et al. (2023) | Acceptable |
| d_G (geodesic discrepancy) | 0.193 | As low as possible | Hair et al. (2023) | Acceptable |
| Chi-square (χ2) | 1,985.56 | Not significant preferred | Hair et al. (2023) | Descriptive only in PLS-SEM |
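SRMR is the square root of the mean squared difference between observed and model-implied correlations, taken over the non-redundant elements of the matrix. The sketch below assumes that definition and uses hypothetical three-indicator matrices; in PLS-SEM software the model-implied matrix comes from the estimated model rather than being supplied by hand, and `srmr` is an illustrative helper.

```python
from math import sqrt


def srmr(observed, implied):
    """SRMR over the lower triangle (diagonal included) of two correlation matrices."""
    p = len(observed)
    residuals = [observed[i][j] - implied[i][j]
                 for i in range(p) for j in range(i + 1)]  # p(p+1)/2 unique elements
    return sqrt(sum(r ** 2 for r in residuals) / len(residuals))


# Hypothetical observed and model-implied correlations for three indicators.
observed = [
    [1.00, 0.50, 0.40],
    [0.50, 1.00, 0.30],
    [0.40, 0.30, 1.00],
]
implied = [
    [1.00, 0.45, 0.35],
    [0.45, 1.00, 0.25],
    [0.35, 0.25, 1.00],
]
value = srmr(observed, implied)
print(round(value, 4), "below 0.08" if value < 0.08 else "at or above 0.08")
```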

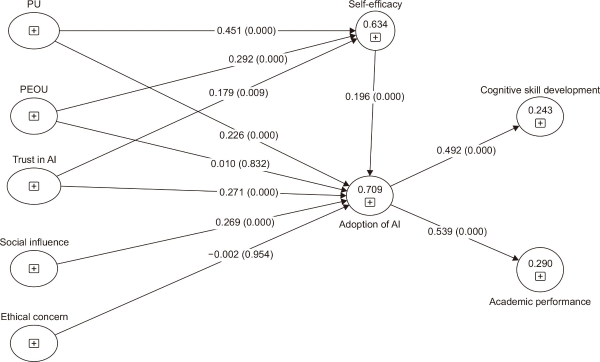

The structural model was assessed by examining the coefficients of determination (R²), the effect sizes (f²), and the path coefficients. As Table 4 shows, the R² of AI adoption is 0.695, indicating that the five predictors explain 69.5% of the variance in AI adoption. AI adoption explains 29% of the variance in academic performance and 24.2% of the variance in cognitive skill development. After the mediator self-efficacy was included, the R² of self-efficacy was 0.634 (Fig. 1), the R² of AI adoption increased to 0.709, the R² of academic performance remained at 0.290, and the R² of cognitive skill development increased slightly to 0.243. All significant paths have f² values of at least 0.02, whereas all non-significant paths have f² values below 0.02.
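R² and f² are closely related: R² is one minus the ratio of the residual to the total sum of squares, and f² for a given predictor is the change in R² when that predictor is omitted, relative to the variance left unexplained by the full model. The sketch below encodes both, together with Cohen's conventional benchmarks (0.02 small, 0.15 medium, 0.35 large), which share the 0.02 boundary used above. The function names and the R² values in the example are hypothetical; the text does not report the R² obtained with each path omitted.

```python
from statistics import fmean


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_obs = fmean(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1 - ss_res / ss_tot


def f_squared(r2_included, r2_excluded):
    """Cohen's f^2 for one predictor: gain in R^2 relative to the unexplained variance."""
    return (r2_included - r2_excluded) / (1 - r2_included)


def effect_label(f2):
    """Cohen's conventional benchmarks for f^2."""
    if f2 >= 0.35:
        return "large"
    if f2 >= 0.15:
        return "medium"
    if f2 >= 0.02:
        return "small"
    return "negligible"


# Hypothetical example: R^2 = 0.60 with the predictor, 0.55 without it.
f2 = f_squared(0.60, 0.55)
print(round(f2, 3), effect_label(f2))  # → 0.125 small
```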

Table 4

Results of hypothesis testing

| Hypothesis | Path | B | Std. | T | p-value | f² | R² |
|---|---|---|---|---|---|---|---|
| H1 | PU→Adoption of AI | 0.308 | 0.049 | 6.276 | <0.05 | 0.12 | 0.695 |
| H2 | PEOU→Adoption of AI | 0.062 | 0.050 | 1.249 | 0.212 | 0.01 | |
| H3 | Trust in AI→Adoption of AI | 0.298 | 0.066 | 4.508 | <0.05 | 0.11 | |
| H4 | Social influence→Adoption of AI | 0.287 | 0.062 | 4.588 | <0.05 | 0.10 | |
| H5 | Ethical concern→Adoption of AI | 0.014 | 0.030 | 0.460 | 0.645 | 0.00 | |
| H6 | PU→Self-efficacy→Adoption of AI | 0.088 | 0.025 | 3.488 | <0.001 | 0.03 | 0.709 |
| H7 | PEOU→Self-efficacy→Adoption of AI | 0.057 | 0.020 | 2.895 | 0.004 | 0.02 | |
| H8 | Trust in AI→Self-efficacy→Adoption of AI | 0.035 | 0.018 | 1.931 | 0.054 | 0.01 | |
| H9 | Adoption of AI→Academic performance | 0.539 | 0.028 | 19.482 | <0.05 | 0.21 | 0.290 |
| H10 | Adoption of AI→Cognitive skill development | 0.492 | 0.052 | 9.548 | <0.05 | 0.19 | 0.242 |

Fig. 1

Structural model. PU, perceived usefulness; PEOU, perceived ease of use; AI, artificial intelligence.

Table 4 reports the results of the hypothesis tests based on the direct-effect model and the mediating-effect model. H denotes the hypothesis, B the path coefficient, Std. the standard deviation, T the t-value, p the p-value, f² the effect size, and R² the coefficient of determination.
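The T and p columns of Table 4 follow from B and Std.: the t-value is the coefficient divided by its standard deviation, and a two-tailed p-value can then be read from a reference distribution. The Python sketch below assumes a standard normal reference distribution, which the text does not state, and the function names are illustrative. Recomputed values differ slightly from the table (for example, 0.308/0.049 gives about 6.29 rather than 6.276) because the reported B and Std. values are rounded.

```python
from math import erfc, sqrt


def t_value(coefficient, std_dev):
    """t statistic: path coefficient divided by its standard deviation."""
    return coefficient / std_dev


def two_tailed_p(t):
    """Two-tailed p-value under a standard normal approximation: 2 * (1 - Phi(|t|))."""
    return erfc(abs(t) / sqrt(2))


# B and Std. for three rows of Table 4.
rows = {"H1": (0.308, 0.049), "H2": (0.062, 0.050), "H8": (0.035, 0.018)}
for hypothesis, (b, sd) in rows.items():
    t = t_value(b, sd)
    print(hypothesis, round(t, 3), round(two_tailed_p(t), 3))
```

Applied directly to the reported T=1.931 (H8), this approximation gives p of about 0.053, close to the reported 0.054, which illustrates why H8 falls just short of significance at α=0.05.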

The analysis supports seven of the ten hypotheses. PU has a significant positive effect on AI adoption (B=0.308, p<0.05); thus, H1 is supported. H2, which concerns the effect of PEOU on AI adoption, is rejected (B=0.062, p=0.212). Trust in AI has a significant positive effect on AI adoption (B=0.298, p<0.05); thus, H3 is supported. H4 is also supported, because social influence significantly predicts AI adoption (B=0.287, p<0.05). H5 is rejected: ethical concerns have no significant effect on AI adoption (B=0.014, p=0.645). This non-significant effect suggests that students may compartmentalize their ethical worries when making practical adoption decisions, prioritizing immediate academic benefits over longer-term ethical considerations.
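As a quick consistency check on the count of supported hypotheses, the T-values in Table 4 can be compared with the two-tailed critical value of 1.96 for α=0.05. The critical value is a normal approximation assumed here; the text reports p-values rather than the critical value used.

```python
# T-values transcribed from Table 4.
T_VALUES = {
    "H1": 6.276, "H2": 1.249, "H3": 4.508, "H4": 4.588, "H5": 0.460,
    "H6": 3.488, "H7": 2.895, "H8": 1.931, "H9": 19.482, "H10": 9.548,
}
CRITICAL_T = 1.96  # assumed two-tailed critical value for alpha = 0.05


def decide(t_values, critical=CRITICAL_T):
    """Mark a hypothesis as supported when |T| exceeds the critical value."""
    return {h: abs(t) > critical for h, t in t_values.items()}


decisions = decide(T_VALUES)
supported = [h for h, ok in decisions.items() if ok]
print(f"{len(supported)} of {len(decisions)} supported:", ", ".join(supported))
# → 7 of 10 supported: H1, H3, H4, H6, H7, H9, H10
```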

Regarding the mediating role of self-efficacy, mediation was confirmed for PU and PEOU but not for trust in AI. Self-efficacy therefore accounts for at least part of the relationship between PU and AI adoption and between PEOU and AI adoption, highlighting students' confidence as a key mechanism that translates beliefs into adoption behaviour. In contrast, self-efficacy plays no significant role between trust in AI and AI adoption (B=0.035, p=0.054). Therefore, H6 and H7 are supported, while H8 is rejected. The lack of mediation for trust through self-efficacy suggests that these constructs operate largely independently in this sample: students' trust in AI systems does not appear to translate into greater confidence in using them. Finally, AI adoption has a significant effect on both educational outcomes, namely academic performance (B=0.539, p<0.05) and cognitive skill development (B=0.492, p<0.05). Therefore, H9 and H10 are supported.
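The text does not describe how the indirect effects were tested; a common approach is to bootstrap the product a×b, where a is the path from the predictor to the mediator and b the path from the mediator to the outcome, and to treat mediation as confirmed when the percentile confidence interval excludes zero. The sketch below illustrates that generic technique under stated assumptions: it uses observed scores and ordinary least squares rather than PLS latent variable scores, and the data from `simulate` are hypothetical, with a true indirect effect of 0.6×0.5=0.3. All function names are illustrative.

```python
import random
from statistics import fmean


def _cross(u, v):
    """Sum of cross-products of mean-centred values."""
    mu, mv = fmean(u), fmean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v))


def indirect_effect(x, m, y):
    """a*b for the model X -> M -> Y, with Y regressed on both X and M."""
    sxx, smm, sxm = _cross(x, x), _cross(m, m), _cross(x, m)
    sxy, smy = _cross(x, y), _cross(m, y)
    a = sxm / sxx                                         # slope of M on X
    b = (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)  # slope of Y on M, controlling for X
    return a * b


def bootstrap_ci(x, m, y, n_boot=300, alpha=0.05, seed=1):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[int(alpha / 2 * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


def simulate(n=300, seed=42):
    """Hypothetical data: X -> M (0.6), M -> Y (0.5), direct X -> Y (0.2)."""
    rng = random.Random(seed)
    x = [rng.gauss(0, 1) for _ in range(n)]
    m = [0.6 * xi + rng.gauss(0, 1) for xi in x]
    y = [0.5 * mi + 0.2 * xi + rng.gauss(0, 1) for xi, mi in zip(x, m)]
    return x, m, y


if __name__ == "__main__":
    x, m, y = simulate()
    est = indirect_effect(x, m, y)
    lo, hi = bootstrap_ci(x, m, y)
    # Mediation is supported when the interval excludes zero.
    print(f"indirect effect = {est:.3f}, 95% CI [{lo:.3f}, {hi:.3f}]")
```

A non-significant indirect effect such as H8 corresponds, in this framework, to an interval that includes zero.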

5. DISCUSSION

This study's findings offer several theoretical contributions to the technology acceptance literature. The supported hypotheses generally validate the applicability of TAM in AI adoption contexts within Saudi higher education. The strong predictive power of PU aligns with Davis's (1989) original propositions, suggesting that this core TAM construct remains relevant for emerging educational technologies. In addition, the significant effect of social influence is consistent with UTAUT (Venkatesh et al., 2003), suggesting that social norms may operate in Saudi academic environments much as they do in the Western contexts in which UTAUT was developed.

The rejection of the hypotheses related to PEOU and ethical concerns warrants deeper theoretical reflection. The non-significant effect of PEOU suggests that, in the Saudi higher education context, students may already possess sufficient digital literacy and exposure to technology, which reduces the salience of usability issues. This aligns with findings from digitally advanced societies indicating that, once baseline technological competence is achieved, ease of use becomes less critical than PU or social endorsement in predicting adoption. Regarding ethical concerns, the absence of a significant effect may reflect a combination of cultural and contextual factors. Students may prioritize the immediate academic benefits of AI tools over abstract ethical risks, particularly when trust in state institutions and regulatory frameworks is high. Additionally, cultural and religious values, such as reliance on institutional authority and Islamic ethical orientations, may mitigate individual apprehensions about privacy and algorithmic fairness. These insights suggest that, although global AI ethics debates are prominent, students in this context tend in practice to compartmentalize such concerns and give precedence to pragmatic educational gains.

The limited weight students gave to ethical concerns, despite the prominence of global debates on AI ethics, requires a nuanced interpretation. In the Saudi context, several interrelated factors may account for this pattern. First, cultural norms and the collectivist orientation of society may lead students to defer to institutional and governmental oversight, assuming that AI systems deployed in universities already conform to ethical and legal standards. Second, religious and moral frameworks, particularly Islamic digital ethics, emphasize trust in authority and compliance with institutional rules, which may reduce the salience of individual-level ethical questioning. Third, students' awareness of technical risks, such as data privacy breaches or algorithmic bias, may be relatively low, limiting their ability to evaluate AI systems critically. Finally, students' pragmatic orientation toward immediate academic benefits may further explain why ethical issues were deprioritized. These findings suggest that ethical awareness campaigns, integrated into digital literacy and AI literacy programs, could help students engage more fully with the ethical dimensions of AI in education while maintaining trust in institutional safeguards.

The significant mediating role of self-efficacy (H6, H7) extends Bandura's (1986) social cognitive theory by demonstrating its relevance to AI technology adoption. However, the lack of mediation for trust (H8) suggests that trust in AI systems may function differently from trust in human instructors or conventional technologies, highlighting the need for theoretical refinement regarding trust mechanisms in AI-enabled learning environments. The non-significant effect of ethical concerns runs counter to much of the contemporary AI ethics literature, possibly indicating a disconnect between abstract ethical considerations and students' practical adoption decisions.

6. IMPLICATIONS

This study improves theoretical understanding of educational technology adoption. First, it strengthens TAM by confirming its core concepts and identifying boundary conditions for AI adoption. The strong predictive power of PU supports TAM and its relevance to advanced technologies such as AI. By contrast, the non-significant effect of PEOU challenges a core TAM assumption, suggesting that ease of use may matter less for adoption decisions as users gain technical proficiency.

The present research also improves theoretical understanding by including trust in AI as a major predictor, extending beyond the traditional TAM components. This supports earlier work calling for models that account for the unique traits of AI systems, notably their opaque decision-making processes. Because users must rely on system outputs without understanding the underlying algorithms, human-AI interactions require new theoretical frameworks to explain how trust is formed. The significant effect of social influence supports UTAUT in a collectivist educational context. This finding suggests that social norms may shape technology adoption across cultures, even though the relevant reference groups (e.g., peers versus instructors) and their relative influence may differ. Finally, the limited effect of ethical concerns challenges assumptions in the AI ethics literature, pointing to a disjunction between theoretical ethical norms and actual implementation choices that requires further theoretical research.

Beyond validating established frameworks such as TAM, UTAUT, and social cognitive theory, this study highlights the need to incorporate additional constructs that capture the unique challenges of AI adoption in educational contexts. Future research could integrate variables such as techno-stress, which reflects the psychological strain arising from overexposure to AI-driven platforms, and AI literacy, which measures students' ability to critically understand and evaluate AI systems. Both constructs are increasingly relevant as universities adopt advanced digital infrastructures. Similarly, resistance to change may offer explanatory power in contexts where traditional learning methods remain dominant and cultural norms shape technology acceptance. Embedding these constructs into future models would not only add theoretical novelty but also help create a culturally adaptive framework tailored to Middle East and North Africa (MENA) education systems, thereby advancing the literature beyond the boundaries of classical adoption theories.

The findings also suggest several practical measures for institutions seeking to promote AI adoption. Because PU affects acceptance, institutions should emphasise the academic benefits of AI technology when introducing it. This may include discipline-specific presentations of AI applications, peer demonstration programs in which early adopters share what they have learned, and practical demonstrations of how AI tools can improve learning.

Because trust in AI drives adoption, universities should actively build it. Practical ways to do so include transparency standards for institutional AI tools, clear descriptions of the capabilities and limitations of AI systems, and channels through which students can raise concerns about algorithms. These measures may help students trust AI in education.

The mediating role of self-efficacy underlines the need for AI instruction that increases student confidence. Institutions should offer incremental skill-building programs, such as practical workshops and supervised practice, rather than purely technical instruction, and these programs should focus on how AI can improve academic learning.

Although ethical concerns did not significantly affect adoption decisions, the student responses suggest that institutions should not neglect ethical education. Institutions should incorporate AI ethics into digital literacy courses, establish norms for academic AI use, and host discussions on the ethics of AI in education.

Finally, because AI adoption is positively associated with academic performance and cognitive skill development, institutions should proactively integrate AI into the curriculum to optimise learning. This may entail revising examinations to reflect AI-enhanced skills, providing faculty development on AI pedagogy, and helping teachers integrate AI technology to maximise its educational benefits.

7. CONCLUSION

This study advances understanding of AI adoption in higher education by validating core technology acceptance principles while identifying important cultural specificities in the Saudi context. The findings suggest that, although traditional adoption factors remain relevant, the unique characteristics of AI technologies and local educational cultures require theoretical refinement and tailored implementation approaches. By addressing the limitations identified below in future research, scholars can develop more comprehensive models of educational technology adoption in the AI era. For practitioners, the results highlight both opportunities and challenges in leveraging AI tools to enhance teaching and learning outcomes. This study has several limitations. First, its focus on undergraduate students in Saudi Arabia limits the generalizability of the findings beyond this population.

Another key limitation of this study lies in the measurement of academic performance and cognitive skills, which relied exclusively on self-reported data. While self-assessments provide valuable insights into students’ perceptions, they are inherently subjective and may be influenced by social desirability bias or limited self-awareness. The absence of objective performance indicators, such as grade point average records, standardized test scores, or instructor evaluations, reduces the robustness of outcome assessment. Future research should aim to triangulate self-reports with objective academic data and faculty assessments, thereby enhancing measurement validity and allowing for a more comprehensive evaluation of the educational impact of AI adoption.

A further limitation concerns the scope of the sample, which was restricted to undergraduate students in public universities. While this focus ensured a clear and context-specific understanding of AI adoption in Saudi higher education, it limits the generalizability of the findings. Patterns of adoption may differ in private universities, where resources and institutional policies may be more flexible, or among graduate students, who often engage with AI in more specialized and research-intensive ways. Moreover, the exclusive national focus does not capture potential cross-cultural variations across MENA or other global contexts. Future studies should therefore broaden the sampling frame to include private institutions, graduate cohorts, and cross-national comparisons in order to validate and extend the present findings.

Future studies should also examine whether these patterns hold in different national contexts. In addition, this study adopted a cross-sectional design and therefore cannot capture how adoption patterns evolve over time; longitudinal research is recommended because it could reveal important developmental trajectories in AI tool usage. Regarding measurement, academic performance can be measured objectively or subjectively, and in this study both academic performance and cognitive skill development relied on self-reports rather than objective assessments. Future work should incorporate standardized academic and cognitive tests and extend sampling beyond public universities to private institutions and graduate students. Future research should also investigate why ease of use is less influential in this context than in Western studies, examine the disconnect between ethical concerns and adoption behaviour, and explore instructor perspectives on AI adoption to complement student data. Finally, additional variables could be added as moderators or mediators, such as students' AI literacy, perceived risk, technostress, and IT knowledge, as well as governmental policies supporting the adoption of AI and its impact on educational outcomes.

REFERENCES

(2024). Navigating the confluence of artificial intelligence and education for sustainable development in the era of industry 4.0: Challenges, opportunities, and ethical dimensions. Journal of Cleaner Production, 437, 140527. https://doi.org/10.1016/j.jclepro.2023.140527

(2020). An empirical examination of continuous intention to use m-learning: An integrated model. Education and Information Technologies, 25, 2899-2918. https://doi.org/10.1007/s10639-019-10094-2

(2021). Exploring the factors affecting mobile learning for sustainability in higher education. Sustainability, 13, 7893. https://doi.org/10.3390/su13147893

(2021). Possibilities and apprehensions in the landscape of artificial intelligence in education. Paper presented at the 2021 International Conference on Computational Intelligence and Computing Applications (ICCICA), Nagpur, India. https://doi.org/10.1109/ICCICA52458.2021.9697272

(2022). Developing a blockchain-based digitally secured model for the educational sector in Saudi Arabia toward digital transformation. PeerJ Computer Science, 8, e1120. https://doi.org/10.7717/peerj-cs.1120

(2023). Artificial intelligence and patient autonomy in obesity treatment decisions: An empirical study of the challenges. Cureus, 15, e49725. https://doi.org/10.7759/cureus.49725

(2023a). Adoption of IoT by telecommunication companies in GCC: The role of blockchain. Decision Science Letters, 12, 55-68. https://doi.org/10.5267/j.dsl.2022.10.006

(2023b). Cloud computing usage by governmental organizations in Saudi Arabia based on Vision 2030. Uncertain Supply Chain Management, 11, 169-178. https://doi.org/10.5267/j.uscm.2022.10.010

(2024). The impact of artificial intelligence on business performance in Saudi Arabia: The role of technological readiness and data quality. Engineering, Technology & Applied Science Research, 14, 16802-16807. https://doi.org/10.48084/etasr.7871

(2024). An artificial neural network prediction model of GFRP residual tensile strength. Engineering, Technology & Applied Science Research, 14, 18277-18282. https://doi.org/10.48084/etasr.9107

(2023). The impact of administrative management and information technology on e-government success: The mediating role of knowledge management practices. Cogent Business & Management, 10, 2202030. https://doi.org/10.1080/23311975.2023.2202030

(2020). In AI we trust? Perceptions about automated decision-making by artificial intelligence. AI & SOCIETY, 35, 611-623. https://doi.org/10.1007/s00146-019-00931-w

(2024). Assessing the acceptance for implementing artificial intelligence technologies in the governmental sector: An empirical study. Engineering, Technology & Applied Science Research, 14, 18160-18170. https://doi.org/10.48084/etasr.8711

(2019). Facilitating student engagement through educational technology: Towards a conceptual framework. Journal of Interactive Media in Education, 1, 1-14. https://doi.org/10.5334/jime.528

(2020). Artificial intelligence in education: A review. IEEE Access, 8, 75264-75278. https://doi.org/10.1109/ACCESS.2020.2988510

(2023). Systematic literature review on opportunities, challenges, and future research recommendations of artificial intelligence in education. Computers and Education: Artificial Intelligence, 4, 100118. https://doi.org/10.1016/j.caeai.2022.100118

(1995). Computer self-efficacy: Development of a measure and initial test. MIS Quarterly, 19, 189-211. https://doi.org/10.2307/249688

(2025). The interplay of learning, analytics and artificial intelligence in education: A vision for hybrid intelligence. British Journal of Educational Technology, 56, 469-488. https://doi.org/10.1111/bjet.13514

(1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13, 319-340. https://doi.org/10.2307/249008

(2021). A review on artificial intelligence in education. Academic Journal of Interdisciplinary Studies, 10, 206. https://doi.org/10.36941/ajis-2021-0077

(2015). Critical factors for cloud based e-invoice service adoption in Taiwan: An empirical study. International Journal of Information Management, 35, 98-109. https://doi.org/10.1016/j.ijinfomgt.2014.10.005

(2024). Uncovering blind spots in education ethics: Insights from a systematic literature review on artificial intelligence in education. International Journal of Artificial Intelligence in Education, 34, 1166-1205. https://doi.org/10.1007/s40593-023-00384-9

(2023). Ethical principles for artificial intelligence in education. Education and Information Technologies, 28, 4221-4241. https://doi.org/10.1007/s10639-022-11316-w

(2024). Impact of artificial intelligence usage and technology competence on competitive advantage with mediating role of effective information management system. Profesional de la información, 33, e330501. https://doi.org/10.3145/epi.2024.ene.0501

(2016). Evolution and revolution in artificial intelligence in education. International Journal of Artificial Intelligence in Education, 26, 582-599. https://doi.org/10.1007/s40593-016-0110-3

(2024). Unveiling the landscape of generative artificial intelligence in education: A comprehensive taxonomy of applications, challenges, and future prospects. Education and Information Technologies, 30, 3239-3278. https://doi.org/10.1007/s10639-024-12936-0

(2019). The technology acceptance model (TAM): A meta-analytic structural equation modeling approach to explaining teachers' adoption of digital technology in education. Computers & Education, 128, 13-35. https://doi.org/10.1016/j.compedu.2018.09.009

(2024). Application of artificial intelligence in the identification of Banana Bunch Top Virus (BBTV) in Mozambique. Engineering, Technology & Applied Science Research, 14, 18734-18740. https://doi.org/10.48084/etasr.7442

(2018). A review of technology acceptance and adoption models and theories. Procedia Manufacturing, 22, 960-967. https://doi.org/10.1016/j.promfg.2018.03.137

(2022). Artificial intelligence in education: AIEd for personalised learning pathways. Electronic Journal of e-Learning, 20, 639-653. https://doi.org/10.34190/ejel.20.5.2597

(2002). Encouraging citizen adoption of e-government by building trust. Electronic Markets, 12, 157-162. https://doi.org/10.1080/101967802320245929